
Higher Education Reform Report: Metrics Not Mature Enough to Replace Peer Review

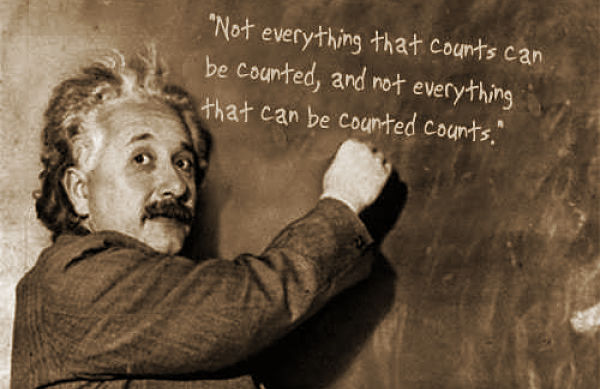

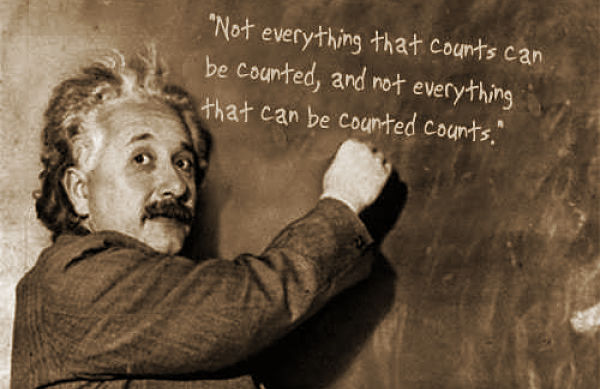

OK, it was actually sociologist William Bruce Cameron who said this, but it’s still a message ‘The Metric Tide’ authors might endorse.

“Citations, journal impact factors, h-indices, even tweets and Facebook likes – there are no end of quantitative measures that can now be used to try to assess the quality and wider impacts of research. But how robust and reliable are such metrics, and what weight – if any – should we give them in the future management of research systems at the national or institutional level?”

So states the just-released report – “The Metric Tide” – from a blue-ribbon British committee that set out to answer this question and lay out recommendations for “responsible” use of academic metrics going forward. Their key message: Metrics cannot replace peer review in the next Research Excellence Framework, the current system for “assessing the quality of research in UK higher education institutions” (and thus determining how the government’s annual £1.6 billion of research funding is distributed).

David Willetts, then the UK minister for universities and science, announced the review in April of last year, and the Higher Education Funding Council for England, or HEFCE, supported the 15-month gestation period. A 12-member steering committee of experts drawn from scientometrics, research funding, research policy, publishing, university management and research administration joined chair James Wilsdon, a professor of science and democracy at the University of Sussex, in preparing the report and setting up the www.ResponsibleMetrics.org blog as a convening place to discuss these issues going forward.

“A lot of the things we value most in academic culture,” Wilsdon explained, “resist simple quantification, and individual indicators can struggle to do justice to the richness and diversity of our research.”

Nonetheless, while the review found metrics currently unsuitable for completely replacing human assessment in the REF, it made 20 recommendations for improving how higher education and those in the penumbra of academe generate and deploy metrics. The recommendations range from reducing the emphasis on the journal impact factor as a promotional tool and being more transparent about how university rankings are generated to creating an annual “Bad Metric” prize to be awarded to the most inappropriate use of quantitative indicators in research management.

The idea that some sort of data-driven assessment might replace human peer review gained steam after the final Research Assessment Exercise (which the REF replaced) in 2008. As one example, in 2011 Patrick Dunleavy, professor of political science and public policy at the London School of Economics, in an article that tagged the REF as “lumbering and expensive,” wrote that a “digital census” could replace the “giant fairytale of ‘peer review,’ one that involves thousands of hours of committee work by busy academics and university administrators, who should all be far more gainfully employed.”

And what does this exercise produce, he asked? “The answer is hardly anything of much worth – the whole process used is obscure, ‘closed book’, undocumented and cannot be challenged. The judgements produced are also so deformed from the outset by the rules of the bureaucratic game that produced it that they have no external value or legitimacy.”

Dunleavy later walked back a few of his criticisms, and predicted (correctly) that his recommendations wouldn’t be used in the 2014 REF, but that the path forward lay in the land of metrics. “… [T]he digital census alternative to REF’s deformed ‘eyeball everything once’ process is not going to go away. If not in [2014], then by 2018 the future of research assessment lies with digital self-regulation and completely open-book processes.”

This new report suggests 2018 might also be too soon, even as it supports the idea that “powerful currents [are] whipping up the metric tide,” such as audits of public spending, insistence on value for money, calls for better strategies in pursuing research, and the elephant in the room – the data is being generated anyway.

“Metrics touch a raw nerve in the research community,” Wilsdon acknowledged, adding that “it’s right to be excited about the potential of new sources of data, which can give us a more detailed picture of the qualities and impacts of research than ever before.” The report, however, is leery of accepting this bounty unskeptically. “In a positive sense, wider use of quantitative indicators, and the emergence of alternative metrics for societal impact, could support the transition to a more open, accountable and outward-facing research system. But placing too much emphasis on narrow, poorly-designed indicators – such as journal impact factors (JIFs) – can have negative consequences.” It cited 2013’s San Francisco Declaration on Research Assessment, which in particular blasted the cavalier use of impact factors as a proxy for academic quality.

Furthermore, the ability to “game” metrics, the temptation to pursue goals that are only logical in the context of generating good numbers, and the difficulty of building a system that is open and understood by everyone in the assessment process all militate against replacing peer review right now.

The report notes that a trial effort using bibliometric indicators of research quality was evaluated in 2008 and 2009. “At that time, it was concluded that citation information was insufficiently robust to be used formulaically or as a primary indicator of quality, but that there might be scope for it to enhance processes of expert review.” Even with better numbers and smarter analysis, it’s still not time.

“Metrics should support, not supplant, expert judgement,” the report states. “Peer review is not perfect, but it is the least worst form of academic governance we have, and should remain the primary basis for assessing research papers, proposals and individuals, and for national assessment exercises like the REF.” And so the report calls for more data to mesh with the existing qualitative framework for the 2018 REF and the research system as a whole.

Among the report’s key recommendations:

- Leaders of higher education institutions should develop a clear statement of principles covering their approach to research management and assessment. Research managers and administrators should champion these principles within their institutions, clearly highlighting that the content and quality of a paper is much more important than the impact factor of the journal in which it was published.

- Hiring managers and recruitment or promotion panels in higher education institutions should be explicit about the criteria used for hiring, tenure, and promotion decisions, and individual researchers should be mindful of the limitations of particular indicators in the way they present their own CVs and evaluate the work of colleagues.

- The UK research system should take full advantage of ORCID as its preferred system of unique researcher identifiers; ORCID should be mandatory for all researchers in the next REF. As ORCID explains on its website, “ORCID aims to solve the name ambiguity problem in research and scholarly communications by creating a central registry of unique identifiers for individual researchers and an open and transparent linking mechanism between ORCID and other current researcher ID schemes.”

- Further investment into improving the research information infrastructure is required. Funders and Jisc should explore opportunities for making additional strategic investments, particularly to improve the interoperability of research management systems.

- A Forum for Responsible Metrics should be established to bring together research funders, institutions and representative bodies, publishers, data providers and others to work on issues of data standards, interoperability, openness and transparency.