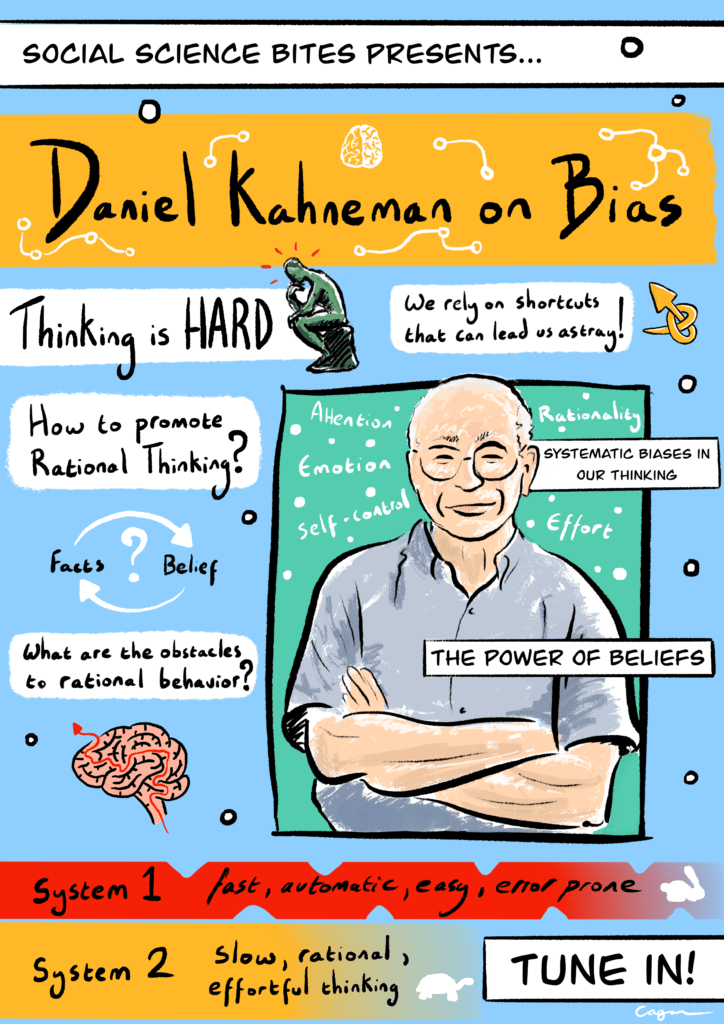

This illustration is part of a series of Social Science Bites illustrations by scientific illustrator Alex Cagan. We’ve looked through our archives and chosen some of our favorite episodes from over the years, which Alex has brought to life in these visualizations. We’ll be unveiling new illustrations in this series through June and July 2020 on our Twitter page. Catch each new illustration as it’s released at the hashtag #SSBillustrated and click here to view all the illustrations so far.

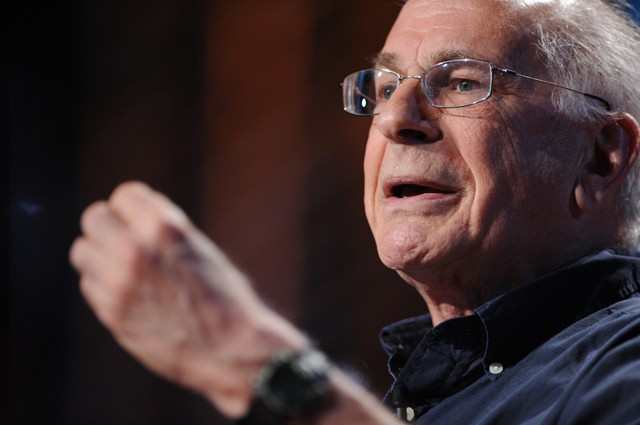

Thinking is hard, and most of the time we rely on simple psychological mechanisms that can lead us astray. In this episode of the Social Science Bites podcast, the Nobel Prize-winning psychologist Daniel Kahneman, author of Thinking, Fast and Slow, talks to Nigel Warburton about biases in our reasoning.

“I’ve called Daniel Kahneman the world’s most influential living psychologist and I believe that is true,” Steven Pinker (another Bites subject) said recently. “He pretty much created the field of behavioral economics and has revolutionized large parts of cognitive psychology and social psychology.”

Click HERE to download a PDF transcript of this conversation. The full text also appears below.

To directly download this podcast, right click HERE and “Save Link As.”

Social Science Bites is made in association with SAGE. For a complete listing of past Social Science Bites podcasts, click here.

***

David Edmonds: He may not have believed that he was doing Economics but the Nobel Committee disagreed. The Israeli-American psychologist Daniel Kahneman won the Nobel Prize in Economics for revolutionary work in Psychology that revealed that human beings are not the rational decision-makers that many economists had claimed that they were. In so doing, and alongside his collaborator Amos Tversky, he essentially founded the school of Behavioural Economics. Kahneman believes that there is a fast dimension to the mind as well as a slow, calculating one. Thinking fast is effective and efficient and often produces the right result but it can also lead us astray.

Nigel Warburton: Daniel Kahneman welcome to Social Science Bites.

Daniel Kahneman: Happy to be here.

Nigel Warburton: The topic that we are going to focus on is bias. Now you’re famous for talking about these two different systems of thought that we operate with: thinking fast and thinking slow. I wonder if you could just give a brief outline of those two ways of thinking.


Daniel Kahneman: Well, there are really two ways that thoughts come to mind. So when I say ‘2 + 2’ the number 4 comes to mind, and when I say ‘17 x 24’ really nothing comes immediately to mind – you are generally aware that this is a multiplication problem. The first kind of thinking, which I associate with System 1, is completely associative, it just happens to you, a thought comes to mind as it were spontaneously or automatically. The second kind of thinking, the one that would produce an answer to the question by computation: that is serial, that is effortful. That is why I call it System 2 or slow thinking.

Nigel Warburton: But this isn’t just in the area of mathematics, this is more broad than that.

Daniel Kahneman: Well certainly. System 1 is defined really as anything that happens automatically in the mind, that is, without any sense of effort, and usually without a sense of authorship. So it could be an emotion, an emotion is System 1: it is something that happens to you, it isn’t something you do. In some cases it could be even an intention: a wish to do something which you feel is something that happens to you. Now the domain of System 2 is that when we speak about System 2, we speak about effortful thinking, if you will, and that includes not only computation and reasoning, but it also includes self-control. Self-control is effortful. And so anything that demands mental effort tends to be classified as System 2 or slow thinking.

Nigel Warburton: So these two systems operate almost in collusion with each other, is that what you’re suggesting?

Daniel Kahneman: Well, in the first case I should make it clear that I do not really propose that they are two systems with individual locations in the brain. This is more of a metaphor to describe how the brain works, or the mind works. And, indeed, what happens to us, and what we do, and how we think involves both systems almost always. System 1, I propose, is invariably active: ideas and thoughts and emotions come to mind through an associative process all the time. And System 2 has a control function: we don’t say anything that comes to mind, and it has in addition to the computational function it can inhibit thoughts from being expressed; it controls action and that is effortful. And it’s the interaction between System 1 and System 2 that in effect, in the story that I tell, defines who we are and how we think.

Nigel Warburton: And one aspect of that is that we have systematic biases in our fast thinking.

Daniel Kahneman: Well, one characteristic of System 1 or the automatic thinking is that something comes to your mind almost always – appropriate or not. Whenever you’re faced with a question or a challenge very likely something will come to your mind. And quite often what comes to your mind is not an answer to the question that you were trying to answer but it’s an answer to another question, a different question. So this happens all the time: I ask you how probable something is and instead of probability what comes to your mind is that you can think of many instances, and you will rely on that to answer the probability question; and it is that substitution that produces systematic biases.

Nigel Warburton: So anecdotal evidence takes over from what you might see as social science in a way, the idea of some kind of systematic analysis of likelihood of events.

Daniel Kahneman: That is right. We rely on systematic thinking much less than we think we do. And indeed much of the time when we think we are thinking systematically, that is when we think we have a reason for our conclusions, in effect the conclusions are dictated by the associative machinery. They are conclusions produced by System 1, in my terminology, which are then rationalized by System 2. So much of our thinking involves System 2 producing explanations for intuitions or feelings that arose automatically in System 1.

Nigel Warburton: I wonder if you could give a specific example of that.

Daniel Kahneman: Well when people are asked a political question ‘What are you in favor of?’ we always are quite capable of producing rationalizations or stories about the reasons that justify our political beliefs. But it’s fairly clear that the reasons are not the causes of our political beliefs, mostly. Mostly we have political beliefs because we belong to a certain circle, people we like hold those beliefs, those beliefs are part of who we are. In the United States for example, there is a high correlation between beliefs about gay marriage and beliefs about climate change. Now it’s very unlikely that this would arise from a rational process of producing reasons: it arises from the nature of beliefs as something that is really part of us.

Nigel Warburton: When you’re investigating these patterns of thought what sort of evidence can you use to support your conclusions?

Daniel Kahneman: Well the evidence is largely experimental. I can give you an example if that would help.

Nigel Warburton: That would be great.

Daniel Kahneman: So this is an experiment that was done in the UK, and I believe that Jonathan Evans was the author of the study, where students were asked to evaluate whether an argument is logically consistent – that is, whether the conclusion follows logically from the premises. The argument runs as follows: ‘All roses are flowers. Some flowers fade quickly. Therefore some roses fade quickly.’ And people are asked ‘Is this a valid argument or not?’ It is not a valid argument. But a very large majority of students believe it is because what comes to their mind automatically is that the conclusion is true, and that comes to mind first. And from there it is a natural move from the conclusion being true to the argument being valid. And people are not really aware that this is how they did it: they just feel the argument is valid, and this is what they say.

Nigel Warburton: Now in that example I know that the confusion between truth and falsehood of premises and the validity of the structure of an argument that’s the kind of thing which you can teach undergraduates in a philosophy class to recognize, and they get better at avoiding the basic fallacious style of reasoning. Is that true of the kinds of biases that you’ve analyzed?

Daniel Kahneman: Well, actually I don’t think that that’s true even of this bias. The thinking of people does not increase radically by being taught the logic course at the university level. What I had in mind when I produced that example is that we find reasons for our political conclusions or political beliefs, and we find those reasons compelling, because we hold the beliefs. It works the opposite of the way that it should work, and that is very similar to believing that an argument is valid because we believe that the conclusion is true. This is true in politics, it is true in religion, and it is true in many other domains where we think that we have reasons but in fact we first have the belief and then we accept the reasons.

Nigel Warburton: That’s really interesting. That echoes something Nietzsche said in Beyond Good and Evil: he suggested that the prejudices of philosophers are really a kind of rationalization of their hearts’ innermost desire: it’s not as if reason leads you to the conclusion, it just looks that way to the philosopher.

Daniel Kahneman: And you know it looks that way to us. That is, most of the time we do believe that we have reasons for whatever we say even when in fact the reason is almost incidental to the fact that we hold a belief.

Nigel Warburton: When you say ‘we’, does that apply universally? Is this a sense of the human predicament or is it something about Western thinking…?

Daniel Kahneman: Well, I believe it’s a human predicament. There are substantial cultural influences over the way that people think, but the basic structure of the mind is a human universal.

Nigel Warburton: Do you see yourself as a social scientist in this research?

Daniel Kahneman: Oh yes. I see myself as a social scientist because psychology’s classified among the social sciences — in the social sciences and broadly the human sciences.

Nigel Warburton: One aspect in the social sciences is that the experimenter is in a sense part of the thing that is being investigated while investigating. So it is very difficult to be entirely objective in the way that you might aim at in the hard sciences.

Daniel Kahneman: I do not recognize this difficulty, actually, in psychological experimentation. My late colleague Amos Tversky and I, actually we always started by investigating our own intuitions. But then you can, in quite a rigorous and controlled way, demonstrate that the intuitive system of other people functions in the same way. I mean, we can reach the wrong conclusions – that I believe is probably true of other sciences as well.

Nigel Warburton: I guess your research might suggest you would reach some wrong conclusions. If everybody’s prone to bias of a systematic kind, then you yourself won’t be immune from that.

Daniel Kahneman: Well undoubtedly there is bias in science, and there are many biases, and I certainly do not claim to be immune from them. I suffer from all of them. We tend to favor our hypotheses. We tend to believe that things are going to work, and sometimes we delude ourselves in believing our conclusions. But the discipline of science is that in principle there is evidence that other people are able to evaluate, and most of the time I believe the system works. I do believe that in that sense psychology is a science.

Nigel Warburton: So would it be fair to summarize what you were saying earlier that your kind of Psychology is not distinctively different in approach from the physical sciences: there’s a sense in which it suffers from the same kinds of issues about bias that other sciences do, but there’s not a strict division between the hard sciences, as it were, and the social sciences.

Daniel Kahneman: No, I really do not think that there is an essential difference. You know, we operate in basically the same way, that is we have hypotheses, and then we design experiments, and the experiments are conducted objectively and with methods that make them mostly repeatable, and then we have the results, and we show the results, and they do or they do not support the conclusions. So the basic machinery of science is present.

Nigel Warburton: Well, some people would say that the big difference is that with Psychology you are dealing with human beings. Human beings are themselves conscious of being experimented on, as it were, and that can affect the results.

Daniel Kahneman: Well, we are fully aware as experimenters of this problem. There is even a technical term for it: we call those ‘demand characteristics’ or ‘demand effects’, that is, that an experimental situation has a suggestive effect. It suggests certain behaviors and has an impact on people’s behavior. In the experimental situation we try to minimize the effects of such experimental biases, but we typically will search for validation of results in another domain. So, for example, we would look for manifestations of similar biases in the conduct of people over whom we have no control outside the laboratory, in the judgements of judges, or juries, or politicians, or so on. And if we find similar biases, that is important confirmation that our theory is more or less correct.

Nigel Warburton: You must be aware that your research has sent ripples out into all kinds of areas, including policy areas. Are you happy about that?

Daniel Kahneman: Oh yes. I happen to believe that psychology does have implications for policy because if people are biased, if their decision-making is prone to particular kinds of errors, then policies that are designed to help people avoid these errors are recommended. Indeed, this is happening in the UK, and, to a significant extent, it is beginning to happen in the United States as well.

Nigel Warburton: So what would you see as the most important practical implication of research that you’ve done?

Daniel Kahneman: Well, there is a field in economics called behavioral economics which sometimes is not all that different from social psychology and which has been strongly influenced by work that Tversky and I and others have done. And I think still the most important implication is in the domain of savings. My friend and colleague Richard Thaler devised a method that actually changes in the United States the amount that people are willing to save to a very substantial extent. And there have been many other applications where you can change behavior by changing minor aspects of the situation.

Nigel Warburton: That’s interesting. So is there a sense in which you are more likely to have an effect through some kind of imposed framework that encourages you to behave in a certain way than you are by reflecting on your own practices and your own biases?

Daniel Kahneman: Oh, certainly. I will give you an example. There are differences in different countries in Europe in how the form for declaring your intent to donate or not to donate your organs in case of accidental death is set up. And in about half the countries the default choice is that you do contribute your organs, but you can check a box that indicates that you do not wish to contribute your organs. And in other countries the default is that you do not contribute your organs, and you have to check a box if you wish to contribute. And the differences in the rate of donation, it’s roughly between 15 percent and 90 percent. That’s an example where behavior is clearly not controlled by reasons: it is controlled by the immediate context and the immediate situation.

Nigel Warburton: Do you find that some people are threatened by what you are saying, because what you seem to be doing is providing experimental evidence of human irrationality in all kinds of areas, and many people have made a career out of saying that reason is what human beings do best, that’s what sets us apart from other animals?

Daniel Kahneman: Well, you can say that reason is, some forms of reasoning, certainly, language-based reasoning, are uniquely human, without claiming that all our behavior is dominated or controlled by reason. We have a System 2, and we are capable of using it.

Nigel Warburton: But why don’t we use System 2 more?

Daniel Kahneman: Because it’s hard work. A law of least effort applies. People are reluctant, some more than others, by the way, there are large individual differences. But thinking is hard, and it’s also slow. And because automatic thinking is usually so efficient, and usually so successful, we have very little reason to work very hard mentally, and frequently we don’t work hard when if we did we would reach different conclusions.

Nigel Warburton: But is there nothing we can do to mitigate the effects of dangerous fast thinking?

Daniel Kahneman: Well, there is to some extent. The question here is, who is ‘we’? As a society when we provide education we are strengthening System 2; when we teach people that reasoning logically is a good thing, we are strengthening System 2. It is not going to make people completely rational, or make people completely reasonable, but you can work in that direction, and certainly self-control is variable: some people have much more of it than other people, and all of us exert self-control more in some situations than in others. And so creating conditions under which people are less likely to abandon self-control, that is part of promoting rationality. We are never going to get there, but we can move in that direction.

Nigel Warburton: So is your own research part of a project, in a sense, to strengthen System 2?

Daniel Kahneman: Oh yes. People are interested in promoting rational behavior. They can be helped I presume by analyzing the obstacles to rational, reasonable behavior, and trying to get around those obstacles.

Nigel Warburton: Daniel Kahneman, thank you very much.

Daniel Kahneman: Thank you.

Social Science Bites

Welcome to the blog for the Social Science Bites podcast: a series of interviews with leading social scientists. Each episode explores an aspect of our social world. You can access all audio and the transcripts from each interview here. Don’t forget to follow us on Twitter @socialscibites.


Hi,

I was wondering if you had plans to set up an RSS feed so people can download the podcasts using free software, e.g. gPodder?

My partner and I are both Linux users, so iTunes is not an option for us. If it’s any help I volunteer myself to help set this up for you!

Kind Regards

Kevin

Hi Kevin,

Thanks for alerting us to the problem for Linux users! A direct link to the podcast RSS is http://socialsciencebites.libsyn.com/rss

Best,

Mithu (on behalf of the socialsciencespace team)