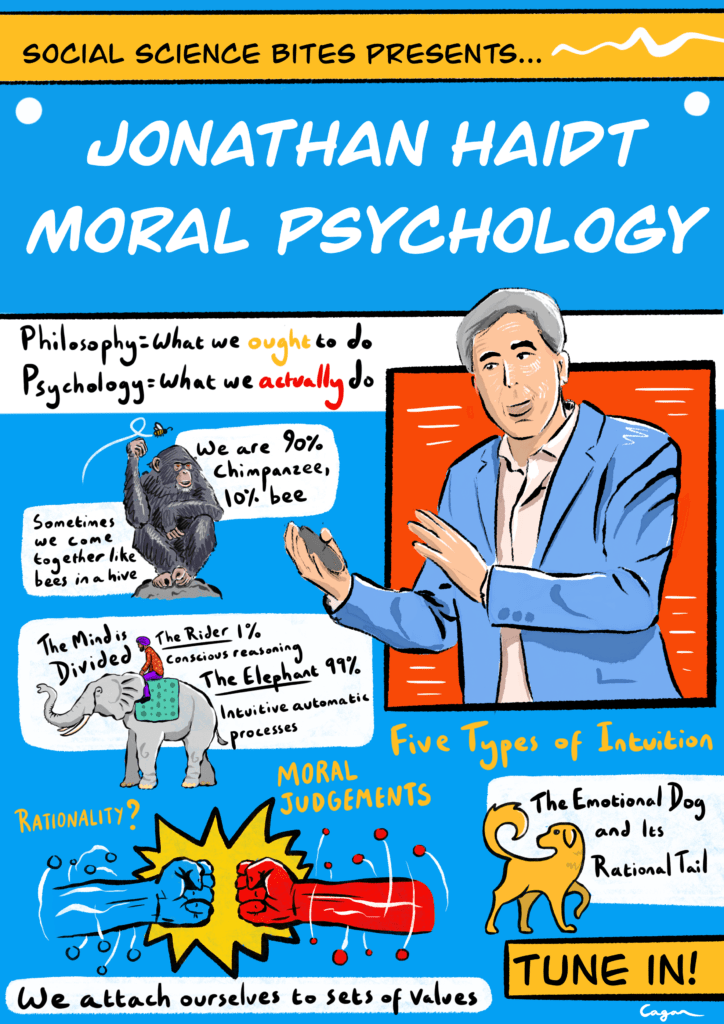

This illustration is part of a series of Social Science Bites illustrations by scientific illustrator Alex Cagan. We’ve looked through our archives and chosen some of our favorite episodes from over the years, which Alex has brought to life in these visualizations. We’ll be unveiling new illustrations in this series through June and July 2020 on our Twitter page. Catch each new illustration as it’s released at the hashtag #SSBillustrated and click here to view all the illustrations so far.

What can psychology tell us about morality? Jonathan Haidt, author of The Righteous Mind, discusses the place of rationality in our moral judgements in this episode of the Social Science Bites podcast.

Social Science Bites is made in association with SAGE. For a complete listing of past Social Science Bites podcasts, click here.

***

David Edmonds: Abortion, capital punishment, euthanasia, free speech, marriage, homosexuality: topics on which liberals and conservatives take radically different views. But why do we adopt certain moral and political judgements? What factors influence us? Is it nature or nurture? Are we governed by emotion or reason? Jonathan Haidt, a psychologist and best-selling author, most recently of The Righteous Mind, was formerly a staunch liberal. His research has now convinced him that no one political persuasion has a monopoly on the truth.

Nigel Warburton: Jonathan Haidt, welcome to Social Science Bites.

Jonathan Haidt: Thank you, Nigel.

Nigel Warburton: The topic we’re going to focus on today is moral psychology. Now, morality is normally linked with philosophy departments, not psychology departments. What is moral psychology?

Jonathan Haidt: Well, philosophers are certainly licensed to help us think about what we ought to do, but what we actually do do is the domain of psychologists, and just as you can talk about a linguistic psychologist, or sexual psychologist, we study all different aspects of human nature: morality, moral judgement, moral behavior, hypocrisy, righteousness. These are major, major topics of huge importance to our political lives, and our common lives.

Nigel Warburton: And as a psychologist, presumably this involves experimentation, or at least observation?

Jonathan Haidt: Well, that’s right. It involves the use of scientific methods which don’t have to be experimental: we can do observation and correlation. As with any difficult area to study, you want to use a lot of different methods, and there’s no substitute, I think, for tuning up your own intuitions, for doing some field work, for reading widely, for talking to people who have varying moral world views. So, in that sense it can be a little bit like anthropology.

Nigel Warburton: And in recent years there have been interesting developments, because fMRI scans have actually been available to allow us to get a glimpse of what’s going on physiologically when people are making decisions.

Jonathan Haidt: That’s right. So the short thing to say about it is ‘Wow, it’s in the brain, the brain actually makes us do moral judgements’. The more interesting thing to say about it is ‘Huh! Look at which areas of the brain are particularly active’. And it turns out, from Josh Greene’s original study, and Antonio Damasio’s before that in the ’nineties, that the emotion areas play a very large role, and the reasoning areas sometimes take a long time to come in. One of the big topics of debate right now is how do you put those together? The fact that we reason logically, we feel emotions, the insula fires when we’re disgusted. I’m on the side that says the two different emotional reactions tend to drive the reasoning reactions, and I think most of the neuroscience literature is consistent with that.

Nigel Warburton: Most of us like to think that when we make a moral decision, it’s somehow a rational decision, it’s not just a gut instinct. Are you saying that’s a kind of self-deception?

Jonathan Haidt: Well, yes. We judge right away. I mean, this is one of the big movements in social psychology, what’s called The Automaticity Revolution. It goes back to Wilhelm Wundt 120 years ago, people pointing out that within the first quarter of a second, we react to people’s faces, we react to words, we react to propositions, and then reasoning is much slower. Robert Zajonc, a very well-known social psychologist, argued in the 1980s that ‘preferences need no inferences’, that our minds react as to aesthetic objects, and then that constrains the nature of our reasoning. What we’re really, really bad at is saying ‘Ok, what’s all the evidence? Let me size it all up and see which way it points.’ We’re terrible at that. What we’re really good at is saying ‘Here’s the hypothesis I want to believe, let me now see if I can find evidence, and if I can’t find any evidence, alright, I’ll give it up. But wouldn’t you know it, I’m pretty much always able to find some evidence to support it’.

Nigel Warburton: So, what you’re saying is that moral reasoning is really just rationalisation on the whole?

Jonathan Haidt: For the most part, when we are doing moral reasoning about anything that is vaguely relevant to us. Some people think that I deny that rationality exists, and no, not at all. You know, we’re able to reason about all sorts of things. I mean, if I want to get from point A to point B, I’ll figure it out, and then if somebody gives me a counterargument and shows me that, no, it’s faster to go through C, I’ll believe him. But moral judgements aren’t just about what’s going on in the world. Our morality is constrained by so many factors; one of the main ones is our team-membership. And so political disagreements have a rather notorious history of being completely impervious to reasons given by the other side, which then makes the other side think that we are not sincere, we’re not rational, and both sides think that about each other. Because what you think about abortion, gay rights, whether a single mother is as good as a married couple as parents, all of these things tie you to your team, and if you change your mind, you are now a traitor, you will not be invited to dinner parties and you might be called some nasty names.

Nigel Warburton: Now, what’s the evidence for the claim that most of our moral reasoning isn’t actually rationally based?

Jonathan Haidt: Well, my own research. I mean, what got me into this was in graduate school, I was reading a lot of ethnography and how morality varies across cultures, and in culture after culture, they had all these rules, all these moral rules – about the body, about menstruation and food taboos – and I was reading the Old Testament, and the Qur’an, and all these books, and so much of our morality is visceral, it’s kind of hard to justify in a cost-benefit analysis. Alright, utilitarians come along and say ‘Well, actually, they’re just wrong, really morality is about human welfare, and all we have to do is maximize human welfare’, and if most people were intuitive utilitarians, then I think you could say that, but what you find is that people are not naturally utilitarians. Again this is not to say utilitarianism is wrong, I’m just saying that people have a lot of moral intuitions, and experiments on persuasion show it’s very hard to persuade people.

My own research involved giving people scenarios that were disgusting, or disrespectful, but had no harm – things like a family that eats their pet dog after the dog was killed by a car in front of their house – and on that scenario, the Ivy League undergraduates did generally say that it was OK, if they chose to do that, it was OK. So there was one group that was rational utilitarian in that sense, or rights-based I suppose you would also say. But the great majority of people, especially in Brazil and especially working class in both countries, said ‘No, it’s wrong, it’s disrespectful, there’s more to morality.’ So just descriptively most people have a lot of moral intuitions: they’re not utilitarians. When you interview them about these, or if you do experiments where you manipulate intuitions, you can basically drive their reasoning to follow the intuitions.

Nigel Warburton: And I know you’ve divided the kinds of intuitions they have into five categories. Would you mind just sort of recapping on those?

Jonathan Haidt: Sure. What really struck me when I was reading all this ethnography and when I spent three months doing research in India, is the degree to which certain things are so recognizably similar all around the world, yet the final pattern of a morality is so unique and so variable. What are the things where there’s good reason to think that there’s some evolutionary basis for this, and the most obvious being reciprocity? I mean, Robert Trivers wrote that famous article on reciprocal altruism. Boy, you know, if someone wants to claim that fairness and reciprocity is socially constructed entirely and learned, because our parents tell us to share. Come on, I mean, that is just ridiculous! Same thing with caring for vulnerable offspring. I mean, we’re mammals, you know! We’ve got all this mammalian stuff in us. So you start with those two and say ‘Nativism has got to be right to some extent about those, but then let’s keep going.’ And the ones that my colleagues and I added are group loyalty, we’re very good at coalitions, there’s respect for authority, the need to maintain order within groups, and then the fifth one is sanctity and purity, the idea that the body is a temple, and this is the one, I think, that you can kind of think of, almost, a dimension of social cognition. I informally call it the Singer-Kass dimension, you’ve got Peter Singer at one end saying ‘All that matters is the consequences for suffering’, and Leon Kass at the other saying ‘Shallow are the souls who have forgotten how to shudder’. Well, most people are a lot closer to Kass than Singer. Again this is just descriptive, not normative. So those are the five that we feel most confident about, but there are many more. Nowadays we think liberty is different from the others.
I think, in the future we’re going to find that property, or ownership, is a moral foundation, you see it all over the animal kingdom with territoriality, and there’s some brand new research from several labs, showing that children at the age of two, or three, really, really notice and care about property and ownership and what’s in someone’s hand versus not in their hand – so I think there are a lot of moral foundations.

Nigel Warburton: One of the interesting insights in your research was the way that, politically, liberals and conservatives are attached to different sets of values.

Jonathan Haidt: Right, that was not my intent originally. I was trying to figure out how culture varied across countries, especially India versus the U.S., and what I was finding in my early work was actually that social class was even bigger than nations sometimes: that’s what I set out to study, and that’s how my colleagues and I came up with this list of moral foundations. So, there I am, doing my moral research, and the Democrats lose in 2000, and then, alright, maybe that was a fluke, and then they lose again in 2004, and I have just had it. I was a fairly strong liberal back then: I really disliked George Bush. So I basically wanted to use my research to help the Democrats get it, to help them connect with morality, because George Bush was connecting, and Gore and Kerry were not. So when I was invited to give a talk to the Charlottesville Democrats in 2004, right after the election, I said ‘Alright, well let me take this cross-cultural theory that I’ve got, and apply it to Left and Right, as though they’re different cultures.’ And boy, it worked well! I expected to get eaten alive: I was basically telling this room full of Democrats that the reason they lost is not because of Karl Rove, and sorcery and trickery, it’s because Democrats, or liberals, have a narrower set of moral foundations: they focus on fairness and care, and they don’t get the more groupish or visceral, patriotic, religious, hierarchical values that most Americans have.

Nigel Warburton: That seems to imply that you’re helping Democrats to be playing to virtues or types of moral thinking that don’t come naturally to them. It’s almost as if you’re suggesting they should be insincere in the way that they put themselves across.

Jonathan Haidt: Right, well when I first got into this, I was thinking ‘I just want the Democrats to win’, it was an open question, whether the advice would be that they should assume a virtue if they have it not. But as I went on, over and over again trying to explain conservatives to liberals, and taking this perspective that you need to tune up your intuitions, you need to treat this like ethnography and field work, you know, I would read everything I could, I subscribed to cable TV so I could get Fox News, and I would watch Fox News shows, and at first it was kind of offensive to me, but once I began to get it, to see ‘Oh I see how this interconnects’ and ‘Oh, you know if you really care about personal responsibility, and if you’re really offended by leeches and mooches and people who do foolish things, then want others to bail them out, yeah, I can see how that’s really offensive, and if you believe that, I can see how the welfare state is one of the most offensive things ever created’. So, I started actually seeing, you know, what both sides are really right about: certain threats and problems. And once you are part of a moral team, that binds you together, but it blinds you to alternate realities, it blinds you to facts that don’t fit your reality. So, as I was writing Chapter 8 of The Righteous Mind, where I tried to explain conservative notions of fairness and liberty, and I handed it to my wife to edit, I told her that I couldn’t call myself a liberal anymore, because I really thought both sides are deeply right about different issues.

Nigel Warburton: That’s really interesting. So the connection between your empirical research, which, like most social science research, aims at a certain degree of neutrality, and your own personal political beliefs was pretty intimate.

Jonathan Haidt: That’s right. If you’re studying morality, it’s kind of like you’re studying the operating system of our social life, and since the operating system of academe is liberal, is very liberal, I was enmeshed in the liberal team, and you know, as I said, my goal here was to help my team win. I wasn’t trying to pervert my science, but I was trying to use it as an activist would. You know, we have a lot of debate in social psychology, whether it’s OK to be activist, because we have a lot of social psychologists who are activists especially on race and gender issues, and most people think that’s ok. But I’ve come to think it’s not. Once you become part of a team, motivated reasoning and the confirmation bias are so powerful that you’re going to find support for whatever you want to believe. I mean, I’d like to think that my research, eventually, helped me get out of my team and be a free agent.

Nigel Warburton: Do you want to generalize from your own experience? Or are you saying that social scientists ought to remain aloof from politics?

Jonathan Haidt: Well, practically speaking, what I’m saying is that if you are a partisan, you are not going to process reality evenly. Science doesn’t require that we all be neutral and even-handed. The way science works, the reason why it works so brilliantly, is not because scientists are so rational, it’s because the institution of science guarantees that whatever we say is going to be challenged. So as long as we have a working intellectual marketplace, as long as there’s somebody to take the other side of a bet, someone to try to refute what we’re saying, someone to at least formulate what went wrong with what we did in peer review, then science can be full of biased people. The problem is, if everybody shares the same bias, there’s nobody on the other side, and it is guaranteed that the group will reach conclusions that are simply false. And that’s what’s happened, not on most issues, but on politically charged issues: race, gender and politics.

Nigel Warburton: One thing that’s noticeable in your work is the way you use metaphors. So, you’ve got a metaphor of a dog wagging a tail, or a tail wagging the dog, you’ve got the metaphor of the beehive. How important are metaphors for you?

Jonathan Haidt: If what I’m saying is right, and we are intuitive creatures that are not persuaded just by logic, things have to feel right first, and then we look for supporting evidence, and if it feels right and we see the evidence, then we believe. So, if I’m trying to persuade people and say ‘Look, here’s how the mind works, here’s how morality works’ I have to offer them, not just a whole list of experiments – every science book does that – but I have to give them some metaphors to help them accommodate, to help them change their mental structures, and then have a place to put all these experiments that I then summarize. So, my first big review article, published in 2001 in the Psychological Review, was titled ‘The Emotional Dog and Its Rational Tail.’ I was trying to make the case, based mostly on a review of the literature, not my own research at that point, that intuitions drive reasoning, not the other way around. So that’s a metaphor that I put out there in the title of my paper, and that seemed to stick: a lot of people seemed to gravitate to that. The second metaphor that I developed that’s gotten some currency was for The Happiness Hypothesis. I developed the metaphor that the mind is divided into parts, like a rider on an elephant, where the rider is the conscious reasoning verbal-based processes, one or two percent of what goes on in our heads, and the elephant is the other ninety-nine percent, the intuitive automatic processes which are largely invisible to consciousness. 
That’s the best metaphor I ever developed: I hear from people all the time ‘Oh yeah, I read your book, don’t remember anything about it, but man, that metaphor, that stuck with me forever, and I use it in my psychotherapy practice.’ And then in The Righteous Mind I’ve added a few more metaphors, but one is the idea of hive psychology: that we human beings are products of individual-level selection, just like chimpanzees, that makes us mostly selfish, and we can be strategically altruistic, but, we have this weird feature, which is that under the right circumstances, we love to transcend ourselves, our self-interest, and come together like bees in a hive. These are some of the best times in our lives, these are incredibly important politically, in terms of people joining causes and rallies. So, the metaphor that I developed in The Righteous Mind is that we are ninety percent chimp, and ten percent bee.

Nigel Warburton: Now, you’re a psychologist by training, which is… psychology is usually thought of as part of the social sciences. Is that how you see yourself, as a social scientist?

Jonathan Haidt: Yes, well I study morality, I identify as a social psychologist. But because I focus on a topic from multiple perspectives, I find that some of the best things I’ve read have been by historians, economists, anthropologists, philosophers, especially those philosophers who have been reading empirical literature. I guess I think of myself as a social scientist, almost as much as I think of myself as a social psychologist.

Nigel Warburton: And is there something distinctive about being a social scientist, as opposed to being a scientist, or a philosopher, as it were?

Jonathan Haidt: Oh, yes. The natural sciences form a prototype of what the sciences are. If you’re studying rocks or quarks, and there’s this definitive experiment, and you design the experiment and you put it out there and you know, oh my god, the light rays bent, or they didn’t bend, and sure, they deserve to be the prototypes, it’s very clear, it’s easy to understand. But, you know, rocks and quarks are kind of dumb: they do exactly what the laws of physics tell them to. The social sciences are necessary because the things we study have this property of consciousness and intentionality. There are these emergent properties that rocks and quarks don’t have. Studying people and social systems requires a whole different set of tools and ways of thinking and you can’t get around understanding meaning. For the natural sciences, meaning is not a relevant concept, but it is unavoidable in social sciences.

Nigel Warburton: Jonathan Haidt, thank you very much.

Jonathan Haidt: Nigel, my pleasure.

