In Swedish Repository, OA Citations Trump Non-OA

Here is the thing. Citations have become an obsession within the research community. And even though researchers, university administrators, research councils and journal editors probably all agree that citations are by no means a perfect and objective way of measuring research quality, the system is nevertheless very practical and quite successful. Open Access (OA) is not about citations, nor is it about evaluating and measuring research. OA is about making knowledge freely available to researchers, teachers, students and the public around the world.

This article by Lars Kullman originally appeared on the LSE Impact of Social Sciences blog as “Across all fields, Open Access articles in Swedish repository have a higher citation rate than non-OA articles” and is reposted under the Creative Commons license (CC BY 3.0).

Why then care about citation rates of OA articles? Because citations are a language that researchers and university management understand. The assumption that open access leads to increased citations is widespread among OA proponents. And proponents of this view have tended to be both passionate and argumentative. But what does it look like at Chalmers University of Technology?

Research on whether OA articles receive more citations than non-OA articles officially traces its origins back to 2001, when Steve Lawrence first published a paper indicating an OA citation advantage in the field of computer science. Since then, numerous studies have been conducted on the subject. The explanations offered by previous studies for an OA citation advantage can be summarized as: (1) A general OA advantage: more scholars have access to papers and these therefore receive more citations. (2) An early advantage: the earlier a paper is made available, the earlier it can start accumulating citations. (3) A selection bias / quality advantage: authors choose to self-archive their best papers, and better papers attract more citations.

Existing research on a possible OA citation advantage has used various data sources and methodological approaches. Most studies have, however, compared citations to OA and non-OA papers published in the same journal or in a set of journals within a specific research field. This has been argued to be necessary due to differences in citation practice between scientific disciplines.

An alternative approach could be to use citation-based bibliometric indicators that normalize for such differences and thus allow meaningful cross-disciplinary comparisons of citation impact. Studies on a possible OA citation advantage utilizing field normalized citation data seem to be lacking, but could make an important contribution to this research as they are not limited to comparing like with like.

In this study, field normalized citation scores were combined with data on self-archiving from the university repository, Chalmers Publication Library (CPL), allowing for cross-field citation comparisons between OA and non-OA articles from Chalmers research publication output.

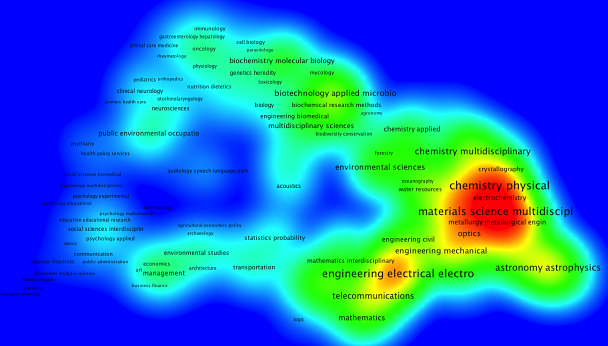

A density view of the subject fields in which Chalmers researchers publish, showing the variety of subjects. The size of the text and the color of the cluster indicate the size of the subject (more red = more publications).

In the study, ‘self-archived paper’ was used as a synonym for ‘OA article’, here defined as a full-text version of a paper freely available in CPL. No distinction was made between published articles (copies edited by the publisher) and final, i.e. accepted, manuscripts.

To calculate mean normalized citation scores (MNCS), bibliographic data from CPL were matched with field normalized citation data from the Centre for Science and Technology Studies (CWTS) at Leiden University. The CWTS analysis is based on Web of Science data. In total, 3470 articles published 2010-2012 were matched, and 899 of those were OA.
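For readers unfamiliar with the indicator, here is a minimal sketch of how an MNCS comparison between OA and non-OA articles can be computed. It assumes the usual definition of MNCS: each article's citation count is divided by the expected (world average) citation count for articles of the same field and publication year, and those ratios are averaged. The `Article` class, its field names and the four sample records are hypothetical and made up for illustration; they are not data from CPL or CWTS.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Article:
    citations: int             # raw citation count
    expected_citations: float  # assumed world average for same field and year
    is_oa: bool                # True if a full text is available in the repository


def mncs(articles):
    """Mean of each article's citations divided by its field baseline."""
    return mean(a.citations / a.expected_citations for a in articles)


# Hypothetical sample: two OA and two non-OA articles.
articles = [
    Article(citations=10, expected_citations=5.0, is_oa=True),
    Article(citations=3, expected_citations=6.0, is_oa=True),
    Article(citations=4, expected_citations=4.0, is_oa=False),
    Article(citations=1, expected_citations=2.0, is_oa=False),
]

oa_score = mncs([a for a in articles if a.is_oa])
non_oa_score = mncs([a for a in articles if not a.is_oa])
print(oa_score, non_oa_score)  # 1.25 0.75
```

A score of 1.0 means an article set is cited exactly as often as the world average for its fields, which is what makes scores from different disciplines comparable.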

The study set out to investigate whether there is a possible OA citation advantage across all fields covered by articles published by Chalmers researchers. The results agree with many of the previous studies indicating such an advantage. The OA articles studied in this paper have a 22% higher field normalized citation rate than the non-OA articles, and the difference is statistically significant. There was a significant difference between the two groups when using field normalized data but not when using raw citations, which illustrates the importance of using field normalized citation data in this case.
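The post does not say which statistical test was used. As one possible illustration of how a difference between two groups of normalized citation scores can be checked for significance, the sketch below uses a simple two-sided permutation test on the difference in means. The function name, its parameters and the sample scores are all hypothetical, and this is not presented as the method of the study.

```python
import random


def permutation_p_value(group_a, group_b, n_permutations=10_000, seed=0):
    """Two-sided permutation test on the absolute difference in means."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible

    def avg(values):
        return sum(values) / len(values)

    observed = abs(avg(group_a) - avg(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    hits = 0
    for _ in range(n_permutations):
        # Reassign group labels at random and recompute the difference.
        rng.shuffle(pooled)
        diff = abs(avg(pooled[:n_a]) - avg(pooled[n_a:]))
        if diff >= observed:
            hits += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (hits + 1) / (n_permutations + 1)


# Hypothetical normalized citation scores, invented for illustration.
oa_scores = [2.0, 2.1, 2.2, 2.3, 2.4]
non_oa_scores = [0.5, 0.6, 0.7, 0.8, 0.9]
p_value = permutation_p_value(oa_scores, non_oa_scores)
print(p_value < 0.05)
```

A small p-value means that a difference as large as the observed one rarely arises when the OA and non-OA labels are shuffled at random.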

What difference does it make if Chalmers articles have a field normalized citation rate of 1.23 compared to 1.01? To put it into a university rankings perspective, it would make a difference of about 100 positions in THE World University Rankings for Chalmers.
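As a quick check, the 22% figure quoted earlier follows from these two scores. The short sketch below computes the relative advantage of 1.23 over 1.01; the function name is hypothetical and chosen for illustration only.

```python
def relative_advantage(oa_score, non_oa_score):
    """Relative difference of the OA score over the non-OA score."""
    return (oa_score - non_oa_score) / non_oa_score


# Field normalized citation rates quoted in the text.
advantage = relative_advantage(1.23, 1.01)
print(f"{advantage:.1%}")  # 21.8%
```

Rounded to a whole number, that is the 22% higher citation rate reported for the OA articles.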

It is said that it takes just one ugly fact to ruin a beautiful hypothesis. The results from this study, with a high share of OA articles in e.g. the field of Astrophysics, suggest that these papers might also be published in arXiv as pre-prints. The logical assumption would be that papers published ahead of print have a longer window to gather citations and will therefore be cited more than papers not published as pre-prints. This early advantage has also been suggested as an explanation for the OA citation advantage. An investigation of this was beyond the scope of this paper, but it is of course an interesting topic for future studies.

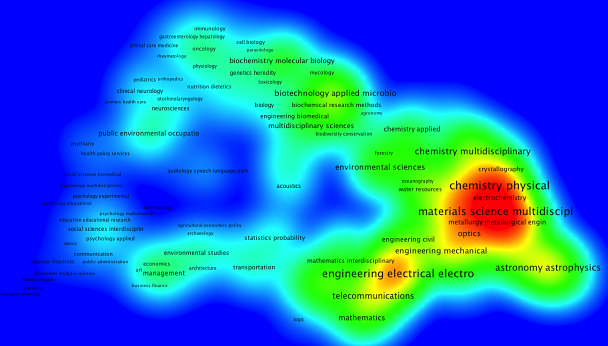

Density view of the subjects in which Chalmers researchers mostly publish OA. There is a strong correlation with fields that might publish preprints in arXiv.

The observed increase in citation rate for OA papers could arguably be caused by a self-selection bias, i.e. that authors choose to self-archive their best papers, rather than the OA availability per se. Chalmers has an OA mandate, but as the compliance level is only 25 percent, a self-selection bias cannot be ruled out.

Whilst this study has focused on the publications from just one university, a second theoretical contribution is that it gives an example of how to make between-field comparisons on the possible OA citation advantage using field-normalized citation data.

***

This post is based on the findings from The Effect of Open Access on Citation Rates of Self-archived Articles at Chalmers (2014).