The North-South Divide Appears in Metrics, Too

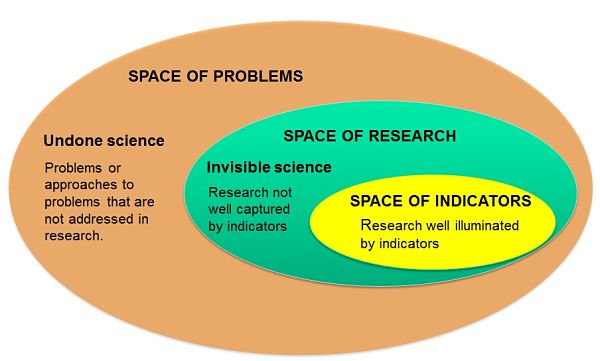

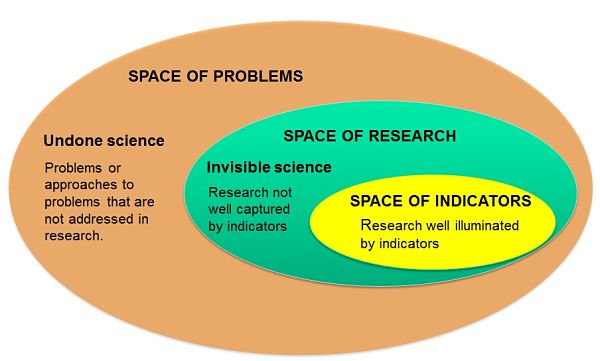

In many countries there is the perception that science could be better at helping address societal problems, such as climate change or obesity. Yet, paradoxically, some of the research activities that may help solve societal issues are not well reflected in the S&T indicators typically used by policy-makers. This is a serious problem because the way research is monitored influences what is valued in assessments and, consequently, the research agendas that are prioritized.

This article by Ismael Rafols and Jordi Molas-Gallart originally appeared on the LSE Impact of Social Sciences blog as “A call for inclusive indicators that explore research activities in ‘peripheral’ topics and developing countries” and is reposted under a Creative Commons license (CC BY 3.0).

Why is there this gap or misalignment between conventional S&T indicators and some relevant research activities? Conventional S&T indicators work reasonably well to capture scholarly contribution and some forms of innovation in areas that have constituted the center of science, i.e. the natural sciences in rich countries. For example, in the US one can monitor contributions to molecular biology via citations, or commercial innovations in biotechnology by patents.

However, conventional S&T indicators are very problematic in “peripheral” spaces. These “peripheries” can be thought of in geographical, cognitive or social dimensions. In geographical terms, developing countries have long been described as “the” periphery. Southern and Eastern European regions can also be conceived as EU peripheries, and less developed regions of a country as dependent on the cities where financial and political power is located. The relative invisibility of certain disciplines or topics can be interpreted as a signature of cognitive periphery. This is the case of the social sciences and the humanities compared to medicine, or of epidemiology within the health sciences. Research for marginalized social groups may also be seen as less “central” than research aligned with the interests of dominant institutions.

S&T indicators aim to capture relevant properties (e.g. innovative activity) by means of data or measures (e.g. patents). To do so, we need to have a model (a “theory”) of why the measure represents the desired property. For example, the use of patent counts (measure) as an indicator of innovative activity (property) is based on the assumption (theoretical model) that a patent is a trace of an innovation with potential socioeconomic value.

The problem is that in developing countries, in “softer” disciplines, or in many social innovations, the assumptions behind conventional S&T indicators do not hold. In other words, in “peripheral” spaces the models often break down and the indicators lose their meaning: patents say little about innovation in Southern Europe or about new cultivation practices; citation counts cannot be used to assess the value of an article on Valencian history.

When indicators are used beyond their range of validity, they provide a distorted perspective of the S&T system. When this distortion occurs, the use of indicators may cause more harm than good. Decision-makers may be influenced by the research that is illuminated by existing indicators and forget about the science that is not visible. This is a major issue, for example, in bibliometric analyses. The number of publications from developing countries that are not covered in the conventional databases (Web of Science and Scopus) is very high. In the case of rice research, for example, the share of Indian and Chinese publications in WoS and Scopus is half the share observed in the more comprehensive CAB Abstracts database (specialised in agriculture). For the US, the situation is the reverse. Developing countries are thus heavily under-represented, and developed countries are over-represented, in the conventional databases.
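The comparison behind this claim is simple arithmetic: compute each country's share of publications in a selective database and in a more comprehensive one, then take the ratio of the two shares. The short Python sketch below illustrates the calculation. The publication counts are invented purely for illustration (they are not the rice-research figures), and the function names `country_shares` and `representation_ratio` are hypothetical helpers, not part of any bibliometric toolkit.

```python
from typing import Dict


def country_shares(counts: Dict[str, int]) -> Dict[str, float]:
    """Return each country's share of all publications in one database."""
    total = sum(counts.values())
    if total == 0:
        raise ValueError("database contains no publications")
    return {country: n / total for country, n in counts.items()}


def representation_ratio(
    selective: Dict[str, int], comprehensive: Dict[str, int]
) -> Dict[str, float]:
    """Ratio of a country's share in the selective database to its share
    in the comprehensive one. Below 1 means under-represented in the
    selective database; above 1 means over-represented."""
    s = country_shares(selective)
    c = country_shares(comprehensive)
    return {
        country: s.get(country, 0.0) / share
        for country, share in c.items()
        if share > 0
    }


# HYPOTHETICAL counts, chosen only to make the arithmetic easy to follow.
selective_db = {"India": 100, "China": 150, "US": 300, "Other": 450}
comprehensive_db = {"India": 400, "China": 600, "US": 300, "Other": 700}

ratios = representation_ratio(selective_db, comprehensive_db)
for country, ratio in ratios.items():
    print(f"{country}: {ratio:.2f}")
```

With these made-up numbers, India and China each hold half the share in the selective database that they hold in the comprehensive one, while the US holds twice its share, which is the pattern the paragraph above describes.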

The uneven representation in databases is likely to induce serious biases in the standard bibliometric indicators, which are used for research assessment. Critical voices have been raised on the potential effects that these biases may have in shifting research contents away from locally relevant research. In order to address these concerns, specialised databases were created over the last two decades to provide better coverage of specific countries or topics, with the open access initiatives Scielo and Redalyc among the most prominent. Last year, the Leiden Manifesto advised developing metrics using local data so as to “protect excellence in locally relevant research.” However, global science reports by organisations such as UNESCO or the Royal Society continue to use Web of Science and Scopus data.

How can indicators be developed in such a way that they capture S&T activities in a broader set of spaces? What policy processes are needed for these new indicators to be adopted in policy? The difficulty of answering these questions lies in the multiplicity of purposes and contexts in which S&T indicators need to be deployed. As suggested above, for indicators to be valid, a theoretical model is needed linking the research mission to be captured with the specific data to be measured. Given the variety of contexts, a responsible approach to metrics has to embrace diversity, as proposed by Hefce’s report The Metric Tide.

A call for inclusive S&T indicators is thus a call for diverse approaches to capturing different types of science in different spaces. Next September, we will hold a conference with the aim of discussing indicators and measurements of research and innovation in all sorts of spaces where research is now invisible: in developing countries, in innovation sites in poor regions or neighborhoods, in unconventional sectors. Old bibliometric tools, such as citation indicators, will be asked to reveal the robustness of their insights under different conditions. Proponents of new analytics, such as altmetrics, will be challenged to show how media attention is a proxy of social contribution in middle-income countries. Deliberative processes for inclusive and participatory uses of indicators in disparate contexts will be proposed and questioned.

We aim to explore a large variety of S&T indicators and associated policy processes with the hope that this plurality will help make research more attuned to people’s needs and desires. For science to help in making the world a better place, we have to improve radically how we measure research: in the global south, in the invisible disciplines and in topics that matter for the disenfranchised.