Can Artificial Intelligence Improve Peer Review and Publishing Itself?

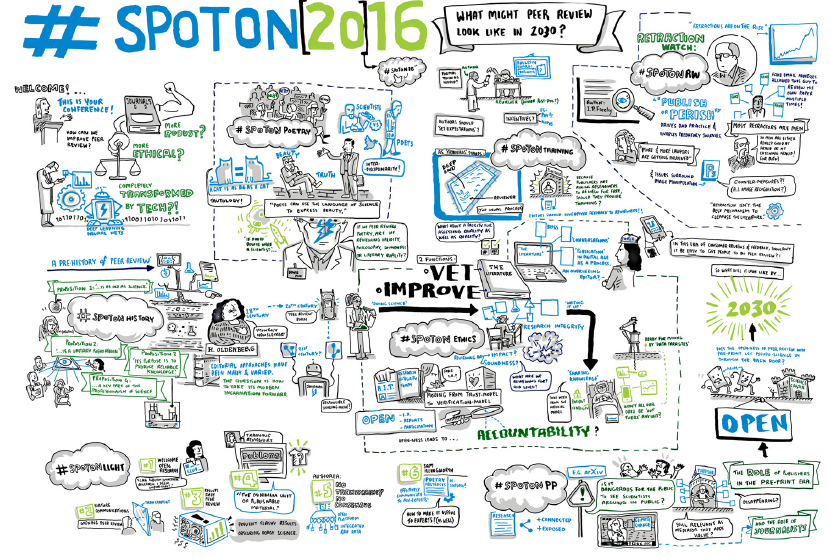

This post is one of six essays that appear in the newly released “SpotOn Report: What might peer review look like in 2030?” from BioMed Central and Digital Science. It is republished under a CC BY 4.0 license. The full report, and this graphic at full size, can be read and downloaded on Figshare.

Artificial intelligence (AI) is a term that has become popular in many industries, because of the potential of AI to quickly perform tasks that typically require more work by a human. Once thought of as the computer software endgame, the early forms of true AI are now being used to address real world issues.

AI solutions underway

Scientific publishing is already using some of the early AI technologies to address certain issues, for example:

- Identifying new peer reviewers: editorial staff are often responsible for managing their own reviewer lists, which includes finding new reviewers. Smart software can identify new potential reviewers from web sources that editors may not have considered.

- Fighting plagiarism: many of the current plagiarism algorithms match text verbatim. The use of synonyms or paraphrasing can foil these services. However, new software can identify components of whole sentences or paragraphs (much like the human mind would). It could identify and flag papers with similar-sounding paragraphs and sentences.

- Bad reporting: if an author fails to report key information, such as sample size, which editors need to make informed decisions on whether to accept or reject a paper, then editors and reviewers should be made aware of this. New technology can scan the text to ensure all necessary information is reported correctly.

- Bad statistics: if scientists apply inappropriate statistical tests to their data, this can lead to false conclusions. AI can identify the most appropriate test to achieve reliable results.

- Data fabrication: AI can often detect if data has been modified or if new data has been generated with the aim of achieving a desired outcome.
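To make the reporting check above concrete, here is a minimal sketch of scanning a manuscript for required items. It assumes a hypothetical checklist (`REQUIRED_ITEMS`) and simple pattern matching. Real tools are likely far more sophisticated, and this is not a description of any particular product.

```python
import re

# Hypothetical checklist: each item maps to a pattern suggesting it was reported.
# The item names and patterns are illustrative assumptions, not a standard.
REQUIRED_ITEMS = {
    "sample size": re.compile(
        r"\bn\s*=\s*\d+|\bsample size\b|\b\d+\s+(?:participants|patients|subjects)\b",
        re.IGNORECASE,
    ),
    "p-value": re.compile(r"\bp\s*[<=>]\s*0?\.\d+", re.IGNORECASE),
    "confidence interval": re.compile(
        r"\bconfidence interval\b|\b\d{2}\s*%\s*CI\b", re.IGNORECASE
    ),
}


def missing_items(manuscript_text):
    """Return the checklist items for which no matching text was found."""
    return [
        name
        for name, pattern in REQUIRED_ITEMS.items()
        if not pattern.search(manuscript_text)
    ]


text = "We enrolled 120 participants. The difference was significant (p < 0.05)."
print(missing_items(text))  # ['confidence interval']
```

A flag from a check like this would only prompt an editor or reviewer to look more closely; pattern matching cannot judge whether the reported value is correct.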

These are just a few of the big challenges that AI is starting to meet. Additional tasks, such as verification of author identities, impact factor prediction, and keyword suggestions are currently being addressed.

At StatReviewer we use what is more strictly defined as Machine Learning to generate statistical and methodological reviews for scientific manuscripts. Machine Learning takes a large body of information and uses it to train software to make identifications. A classic example of this is character recognition: the software is exposed to (i.e. trained on) thousands of variations on the letter A and learns to identify different As in an image.
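The character recognition example can be sketched in a few lines. The toy below is an assumption-laden illustration, not StatReviewer's method: it uses tiny hand-drawn 3x3 glyphs instead of thousands of images, and a nearest-centroid classifier stands in for whatever learning algorithm a real system would use. The function names (`to_vector`, `train`, `classify`) are made up for this sketch.

```python
def to_vector(rows):
    """Flatten a glyph drawn with '#' and '.' into a list of 0/1 pixels."""
    return [1.0 if ch == "#" else 0.0 for row in rows for ch in row]


def train(examples):
    """Average the pixel vectors for each label (a nearest-centroid model)."""
    sums, counts = {}, {}
    for label, rows in examples:
        vec = to_vector(rows)
        if label not in sums:
            sums[label] = [0.0] * len(vec)
            counts[label] = 0
        sums[label] = [s + v for s, v in zip(sums[label], vec)]
        counts[label] += 1
    return {
        label: [s / counts[label] for s in total] for label, total in sums.items()
    }


def classify(model, rows):
    """Return the label whose average glyph is closest to the input glyph."""
    vec = to_vector(rows)

    def squared_distance(centroid):
        return sum((c - v) ** 2 for c, v in zip(centroid, vec))

    return min(model, key=lambda label: squared_distance(model[label]))


# Training set: a few variations of "A" and, for contrast, of "L".
training = [
    ("A", [".#.", "###", "#.#"]),
    ("A", ["###", "###", "#.#"]),
    ("A", [".#.", "#.#", "#.#"]),
    ("L", ["#..", "#..", "###"]),
    ("L", ["#..", "#..", "##."]),
    ("L", [".#.", ".#.", ".##"]),
]
model = train(training)

# A glyph the model has not seen before, drawn as a slightly lopsided "A".
print(classify(model, ["##.", "###", "#.#"]))  # A
```

The point of the sketch is the workflow: the software is never told what an "A" looks like, it only sees labelled examples and generalises from them to a new variation.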

Machine Learning is thought of as the precursor to true AI. In the not-too-distant future, these budding technologies will blossom into extremely powerful tools that will make many of the things we struggle with today seem trivial.

The full automation quandary

In the future, software will be able to complete subject-oriented reviews of manuscripts. When coupled with an automated methodological review, this would enable a fully automated publishing process – including the decision to publish. This is where a slippery slope gets extra slippery.

On the one hand, automating a process that determines what we value as “good science” has risks and is full of ethical dilemmas. The curation that editors and reviewers at scientific journals provide helps us distill signal from noise in research, and provides an idea of what is “important”. If we dehumanize that process, we need to be wary about what values we allow AI to impart. Vigilance will be necessary.

On the other hand, automated publishing would expedite scientific communication. When the time from submission to publication is measured in milliseconds, researchers can share their findings much faster. Additionally, human bias is removed, making automated publishing an unbiased approach.

In the end, if science marches toward a more “open” paradigm, the ethics of full automation become less tricky because the publishing process no longer determines scientific importance. That will be left to the consumers and aggregators of scientific information.

Why use AI then?

Our current publishing model creates an opportunity for predatory journals and publishers to take authors’ money and publish their work without scrutiny. The fact that this happens as frequently as it does tells us that there is not enough capacity within the publishing community to process the amount of scientific writing that is being generated. AI solutions will help to address this shortage in two ways. First, AI will increase the overall capacity to publish quality works by finding new reviewers, creating automated reviews, etc. Second, using AI technologies will make it possible to take an automated retrospective look at published works and quickly identify organisations that are not fulfilling their obligation to uphold appropriate standards.

Skynet – how much automation is too much?

Today, we already have automated consumers of scientific information (I’ve written some of them), and in the future we will have advanced AI consuming it as well. These “AI consumers” will have the history of science at their disposal. They will take in the newly published information and note how it adds to previous information. Before long, the AI may suggest new experiments to continue research on a given subject. In some industries, experiments are conducted mechanically and they are automatically initiated by the AI…and you can see how this might get out of hand.

The point at which an unsupervised AI determines the direction of scientific research is one we have to be wary of. True discovery should be an entirely human idea.

Does this mean that everything a human being could possibly do will eventually be done by machines? That sounds good, but does it mean that sooner or later there won’t be any work left for humans to do? No jobs?