Why the h-index is a Bogus Measure of Academic Impact

Earlier this year, French physician and microbiologist Didier Raoult generated a media uproar over his controversial promotion of hydroxychloroquine to treat COVID-19. The researcher has long pointed to his growing list of publications and high number of citations as an indication of his contribution to science, all summarized in his “h-index.”

The controversy over his recent research presents an opportunity to examine the weaknesses of the h-index, a metric that aims to quantify a researcher’s productivity and impact, used by many organizations to evaluate researchers for promotions or research project funding.

Invented in 2005 by the American physicist Jorge Hirsch, the Hirsch index, or h-index, is an essential reference for many researchers and managers in the academic world. It is particularly promoted and used in the biomedical sciences, a field where the massive number of publications makes any serious qualitative assessment of researchers’ work almost impossible. This alleged indicator of quality has become a mirror in front of which researchers admire themselves or sneer at the pitiful h-index of their colleagues and rivals.

Although experts in bibliometrics (a branch of library and information sciences that uses statistical methods to analyze publications) were quick to point out the dubious nature of this composite indicator, many researchers still do not seem to understand that its properties make it far from valid as a way to seriously and ethically assess the quality or scientific impact of publications.

Promoters of the h-index commit an elementary error of logic. They assert that because Nobel Prize winners generally have a high h-index, the measure is a valid indicator of the individual quality of researchers. However, even if a high h-index can indeed be associated with a Nobel Prize winner, this in no way proves that a low h-index is necessarily associated with a researcher of poor standing.

Indeed, a seemingly low h-index can hide a high scientific impact, at least if one accepts that the usual unit of measure for scientific visibility is reflected in the number of citations received.

Limits of the h-index

Defined as the number of articles N by an author that have each received at least N citations, the h-index is limited by the total number of published articles. For instance, if a person has 20 articles that are each cited 100 times, her h-index is 20 — just like a person who also has 20 articles, but each cited only 20 times. But no serious researcher would say that the two are equal because their h-index is the same.
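The definition can be sketched in a few lines of Python. This is only a minimal illustration, not the implementation used by any citation database; the function name `h_index` and the two citation lists are made up for the example and simply mirror the two hypothetical researchers described above.

```python
def h_index(citations):
    """Return the largest N such that N papers have at least N citations each."""
    # Rank papers from most cited to least cited.
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank  # the top `rank` papers all have at least `rank` citations
        else:
            break
    return h


# Hypothetical researchers from the text: 20 papers each.
researcher_a = [100] * 20  # every paper cited 100 times
researcher_b = [20] * 20   # every paper cited 20 times

print(h_index(researcher_a))                 # 20
print(h_index(researcher_b))                 # 20
print(sum(researcher_a), sum(researcher_b))  # 2000 400
```

Both researchers get an h-index of 20 even though one has five times as many citations as the other, because the index can never exceed the number of papers published.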

The greatest irony in the history of the h-index is that its inventor wanted to counter the claim that the number of published papers represented a researcher’s impact. So he included the number of citations the articles received.

But it turns out that an author’s h-index is strongly correlated (up to about 0.9) with his total number of publications. In other words, it is the number of publications that drives the index more than the number of citations, an indicator which remains the best measure of the visibility of scientific publications.

All of this is well known to experts in bibliometrics, but perhaps less to researchers, managers and journalists who allow themselves to be impressed by scientists parading their h-index.

Raoult vs. Einstein

In a recent investigation into Raoult’s research activities by the French newspaper Médiapart, a researcher who had been a member of the evaluation committee of Raoult’s laboratory said: “What struck her was Didier Raoult’s obsession with his publications. A few minutes before the evaluation of his unit began, the first thing he showed her on his computer was his h-index.” Raoult had also said in Le Point magazine in 2015 that “it was necessary to count the number and impact of researchers’ publications to assess the quality of their work.”

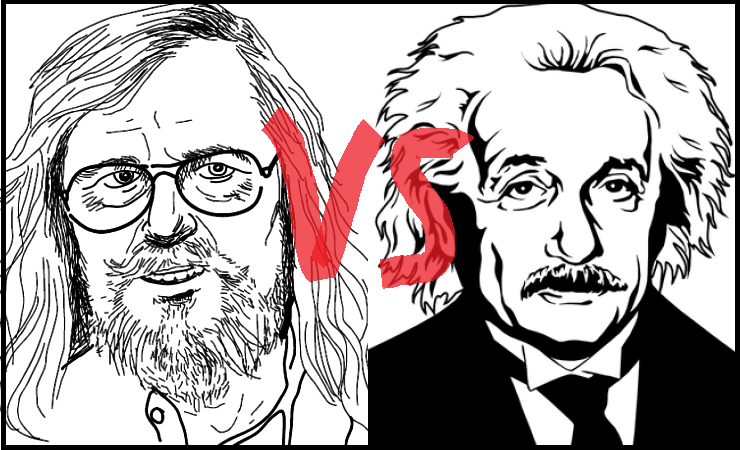

So let’s take a look at Raoult’s h-index and see how it compares to, say, that of a researcher who is considered the greatest scientist of the last century: Albert Einstein.

In the Web of Science database, Raoult has 2,053 articles published between 1979 and 2018, which have received a total of 72,847 citations. His h-index, calculated from the citation counts of these articles, is 120. We know, however, that the value of this index can be artificially inflated through self-citations, that is, when an author cites his own previous papers. The database indicates that among the total citations attributed to the articles co-authored by Raoult, 18,145 come from articles of which he is a co-author. These self-citations amount to 25 per cent of the total. Subtracting these, Raoult’s h-index drops 13 per cent to a value of 104.
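The two percentages quoted above can be checked with simple arithmetic. The sketch below uses only the figures given in the text; the variable names are illustrative. Note that the h-index itself cannot be recomputed this way, since it depends on the citation count of each individual article, which is not given here.

```python
# Figures quoted in the text (Web of Science, articles from 1979 to 2018).
total_citations = 72_847
self_citations = 18_145
h_with_self = 120     # h-index including self-citations
h_without_self = 104  # h-index after removing self-citations

# Share of all citations that are self-citations.
self_share = self_citations / total_citations

# Relative drop in the h-index once self-citations are removed.
h_drop = (h_with_self - h_without_self) / h_with_self

print(f"{self_share:.1%}")  # 24.9%
print(f"{h_drop:.1%}")      # 13.3%
```

Rounded to whole numbers, these match the 25 per cent and 13 per cent cited in the text.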

Now, let’s examine the case of Einstein, who has 147 articles listed in the Web of Science database between 1901 and 1955, the year of his death. For his 147 articles, Einstein has received 1,564 citations during his lifetime. Of this total number of citations, only 27, or a meagre 1.7 per cent, are self-citations. Now, if we add the citations made to his articles after his death, Einstein has received a total of 28,404 citations between 1901 and 2019, which earns him an h-index of 56.

If we have to rely on the so-called “objective” measurement provided by the h-index, we are then forced to conclude that the work of Raoult has twice the scientific impact of that of Einstein, the father of the photon, of special and general relativity, of Bose-Einstein condensation and of stimulated emission, the phenomenon at the origin of lasers.

Or is it perhaps simpler (and better) to conclude, as already suggested, that this indicator is bogus?

One should note the significant difference in the total number of citations received by each of these researchers during their careers. They were obviously active at very different times, and the size of scientific communities, and therefore the number of potential citing authors, has grown considerably over the past half-century.

Disciplinary differences and collaboration patterns must also be taken into account. For example, theoretical physics has far fewer contributors than microbiology, and the number of co-authors per article is smaller, which affects the measure of the productivity and impact of researchers.

Finally, it is important to note that the statement: “The h-index of person P is X,” has no meaning, because the value of the index depends on the content of the database used for its calculation. One should rather say: “The h-index of person P is X, in database Z.”

Hence, according to the Web of Science database, which only contains journals considered to be serious and fairly visible in the scientific field, the h-index of Raoult is 120. On the other hand, in the free and therefore easily accessible database of Google Scholar, his h-index — the one most often repeated in the media — goes up to 179.

Number fetishism

Many scientific communities worship the h-index and this fetishism can have harmful consequences for scientific research. France, for instance, uses a Système d’interrogation, de gestion et d’analyse des publications scientifiques to grant research funds to its biomedical science laboratories. It is based on the number of articles they publish in so-called high impact factor journals. As reported by the newspaper Le Parisien, the frantic pace of Raoult’s publications allows his home institution to earn between 3,600 and 14,400 euros annually for each article published by his team.

Common sense should teach us to be wary of simplistic and one-dimensional indicators. Slowing the maddening pace of scientific publications would certainly lead researchers to lose interest in the h-index. More importantly, abandoning it would contribute to producing scientific papers that will be fewer in number, but certainly more robust.