Rapeglish: A Program that Spits Out Hate — For the Greater Good

Ed. note – This article cites some of the hate speech found online, and those sensitive to explicit language should proceed with that in mind.

As an academic researching gendered cyberhate, I have days when it seems as if the internet is made of rape threats. It might seem odd, therefore, that I have just added another 80 billion misogynist messages to the mix in the form of a Random Rape Threat Generator.

Allow me to explain.

The Random Rape Threat Generator (let’s call it the “RRTG” for short) is made up of hundreds of actual examples of gendered cyberhate that I have archived over 18 years of research and that are analyzed in my new book, Misogyny Online: A Short (and Brutish) History.

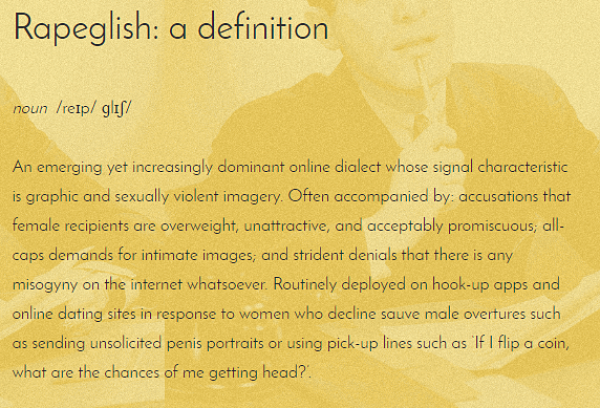

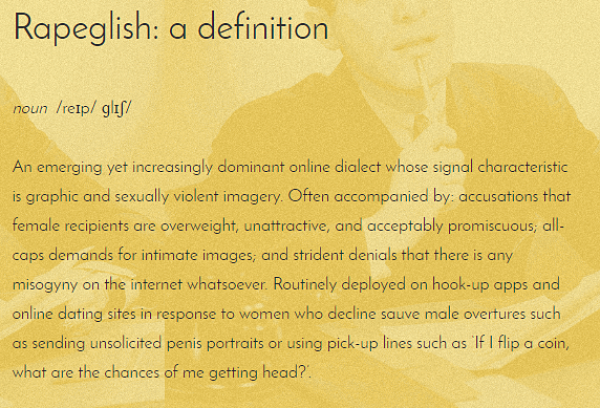

The RRTG is based on a table from my book which demonstrates the formulaic nature of what I call “Rapeglish.” It is a computer program that slices up and shuffles around an archive of sexualized vitriol, rape threats, and aggressive sleaze received by real-life women in order to show that the parts of these messages are interchangeable – as is the hateful gibberish that results.

The generator exposes the quasi-algebraic quality of Rapeglish: the names of senders and receivers, as well as textual elements such as propositional content, adjectives, nouns, and signoffs, can be substituted endlessly without changing the basic structures or take-home messages of the texts.

With its current input data, the generator can produce more than 80 billion unique rape threats, abusive texts, and angry demands for sex: that is, more than 23 individual messages for every woman on earth.

While this may seem like a large number, it’s not inconceivable that we may soon reach a similar figure in the real world – if, indeed, we’re not there already.

In 2015, the United Nations released a report stating that cyber violence against women and girls had become so ubiquitous it risked producing a 21st-century “global pandemic.”

The UN noted that 73 percent of women and girls have been exposed to or have experienced some form of online violence, that women are 27 times more likely to be abused online than men, and that 61 percent of online harassers are male.

Nor are these attacks occasional: they often flood women’s computers and mobile devices in tsunami-grade waves.

During the 2014 attacks on women dubbed “GamerGate,” the games developer Zoë Quinn reportedly accumulated 16 gigabytes of abuse. In 2016, Jess Phillips, the British Labour MP who helped launch a campaign against misogynist bullying, reported receiving 600 rape threats in a single evening.

I personally began collecting examples of Rapeglish in 1998 when I was working as a journalist in the Australian print media and started receiving rapey emails from disgruntled male readers. “All feminists should be gangraped to set them right” was a typical example.

My early thoughts were that while the advent of electronic communication was allowing these sentiments to be expressed with ease and anonymity, it did not create them.

Rather, the combination of disgust and desire identifiable in Rapeglish seemed to speak to something far older about patriarchal attitudes to women, to female sexuality, and to actual or perceived female power.

As time passed, my journalistic interest in the topic led to a PhD, a series of formal academic research projects, and now a new book.

In Misogyny Online: A Short (and Brutish) History, I track the evolution of Rapeglish from a subcultural dialect on the fringes of the early internet to what is often the lingua franca of the mainstream online world.

Over time, cyberhate has become more prevalent, more noxious, more plausibly threatening, and more starkly gendered in nature. One constant, however, is the Campbell’s Soup can quality of the abuse: it is uniform and mass-produced.

What struck me, after talking to many women who have been the targets of gendered cyberhate, is that once I had spent a while staring at the hateful messages they received, the messages started to look virtually indistinguishable.

Specifically, women receive almost identical messages regardless of what behavior supposedly “provokes” the attack. The content of the abuse often bears no relation to what the woman actually did (for example, she is threatened with gang rape for expressing an opinion about cricket).

It is also revealing that the “you look like a tart desperate for cock” emails I was receiving as a feminist humor columnist in the late 1990s are all but identical to the “you’ll cry rape when you get what you’ve asked for” messages sent to a Catholic blogger on the other side of the world more than a decade later.

While gendered cyberhate now receives a great deal of international media coverage, initially I struggled to find academic venues willing to publish my research.

No doubt this was partly due to my difficulties in making the gear shift from journalistic to academic writing (I still wish scholarly journals didn’t have such an anaphylactic reaction to jokes).

Yet I have also made the case that, at least up until recently, there has been an academic tendency to underplay, overlook, ignore, or otherwise marginalize the prevalence, gendered nature, and serious ethical and material ramifications of hostility online.

For instance, an early article I submitted to a highly ranked international journal in the field was rejected partly because an anonymous reviewer suggested that perhaps internet abuse was not being taken seriously because it was poorly written and/or contained spelling and grammatical errors, and therefore lacked credibility.

On another occasion, a reader chastised me for including explicit examples of gendered cyberhate in a piece of academic writing and advised me to remove them for reasons of taste. This same reader also questioned my overall argument that there is a misogyny problem online, on the grounds that he himself had not noticed any misogyny on the internet.

It was frustrating – and I think indicative of a larger scholarly phenomenon – that this particular skeptic did not wish to be exposed to the very evidence that might have supported my thesis.

I mention these anecdotes because another important aim of the RRTG is to help raise awareness about the gendered cyberhate problem by providing a publicly accessible archive for use by interested researchers, teachers, students, and activists, as well as the general public.

To a certain extent, I also want academics such as the two reviewers I mention above to have the opportunity to experience what it is like to be suddenly yelled at in Rapeglish.

My intention is not to cause upset or be gratuitously provocative, but simply to show that receiving a tweet from a man who says he is coming to your home to stick a combat knife into your vagina can be profoundly unsettling even if he misspells the weapon as a “K-bar” instead of a “ka-bar.”

To maximize its potential pedagogical value for those teaching or studying in fields such as gender studies, digital humanities, and game studies, I have provided access to the generator’s complete input data sets.

These data sets are available alongside detailed information about how the generator was constructed. To cut a long coding story very short, my colleague Nicole A Vincent and I built the RRTG by taking hundreds of real-life examples of Rapeglish from my research archives and parsing them into categories.

The generator then spins these input data sets at random, poker-machine style, to produce messages such as:

“My cousin will come and slit your throat and rape you, you obese slut” and “Gag on my rod, you ugly attention-whore.”

Users then have the option of clicking a button reading “The messages I get are rapier than that” to generate further examples.
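For readers curious about the mechanics, the slot-and-shuffle idea can be sketched in a few lines of Python. This is a minimal, hypothetical illustration, not the RRTG’s actual code: the category names, the `TEMPLATE` string, and the `spin` and `count_unique` helpers are assumptions made for this example, and neutral placeholders such as `[adjective 1]` stand in for the archived abuse. It also shows why the output count grows so quickly: the number of distinct messages is the product of the category sizes.

```python
import math
import random

# Hypothetical categories modeled on the ones named in the article.
# Neutral placeholders stand in for the real archived fragments.
SLOTS = {
    "sender": ["[sender A]", "[sender B]", "[sender C]"],
    "receiver": ["[receiver A]", "[receiver B]"],
    "proposition": ["[proposition 1]", "[proposition 2]"],
    "adjective": ["[adjective 1]", "[adjective 2]", "[adjective 3]"],
    "noun": ["[noun 1]", "[noun 2]"],
    "signoff": ["[signoff 1]", "[signoff 2]"],
}

# Hypothetical message skeleton: every slot is filled independently.
TEMPLATE = "To {receiver}: {proposition}, you {adjective} {noun}. {signoff}, {sender}"


def spin(rng: random.Random) -> str:
    """Pick one fragment per category at random, like the reels of a poker machine."""
    picks = {slot: rng.choice(options) for slot, options in SLOTS.items()}
    return TEMPLATE.format(**picks)


def count_unique(slots: dict) -> int:
    """Distinct messages = product of the number of fragments in each category."""
    return math.prod(len(options) for options in slots.values())


if __name__ == "__main__":
    rng = random.Random(0)
    print(spin(rng))
    print(count_unique(SLOTS))
```

With three senders, two receivers, two propositions, three adjectives, two nouns, and two signoffs, `count_unique(SLOTS)` gives 3 × 2 × 2 × 3 × 2 × 2 = 144. Because the categories multiply rather than add, a few hundred fragments spread across several categories can in principle yield counts in the billions.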

To demonstrate the tenuous or nonexistent connection between the content of the violent material sent and the identity and context of the receiver, a more complex, “extended remix” of the RRTG is offered.

This shows the way women are being called diseased whores for having opinions on East Asian economic policy, and the way girls are being threatened with rape for posting videos about fishtail braiding.

It makes no sense until you realize that while the cyber medium may be new, the misogynist messages belong to a far older tradition of gendered abuse and oppression.

This, in other words, is not really about individual women.

It is about gender.