When Talking Broader Impact, Which Websites Do We Value?

What can you cite to prove that your research has had non-academic impacts? In the UK this question has a special significance, as academics’ answers are peer reviewed and linked to research funding. Fortunately, the results from this exercise (the 2014 national evaluation, the Research Excellence Framework or REF) are also published online, so anyone can see how researchers in all fields have answered this question. As part of a recent study, this is exactly what we did: we examined the URLs used to evidence non-academic impacts to find the types of sources cited in each broad discipline and to look for any unexpected patterns.

For those unfamiliar with the REF, the quality of the research from UK academic institutions is periodically assessed, with the outcomes directing the block grant component of research funding. Whilst most of the assessment covers outputs, such as journal articles and monographs, 25 percent of the score derives from a series of impact case studies submitted by each Unit of Assessment (UoA: e.g., a department, school, or research group). These are evidence-based narrative claims that the submitting unit has had specific non-academic impacts, such as on health, government, industry, or culture.

Each REF case study is backed by references to the academic research that underpinned the impact and by references to evidence that the impact occurred. The latter differ from typical academic references because their role is not to acknowledge or draw upon prior research but to demonstrate that an impact has occurred. These sources seem particularly difficult to identify, but collectively give evidence about the nature of the impact.

We found 29,830 URLs cited in the 6,637 REF 2014 impact case studies. These URLs were extracted from the “Sources to corroborate the impact” section to focus on this type of reference. They provided evidence to identify the types of websites found useful in case studies and, by collating them separately by Unit of Assessment, to identify disciplinary differences.
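As a rough illustration of this extraction step, the sketch below pulls URLs out of the text of a corroborating sources section and tallies the cited hostnames separately for each Unit of Assessment. It is not the code used in the study: the regular expression, the function names, and the `(uoa_name, section_text)` input format are all assumptions made for the example.

```python
import re
from collections import Counter, defaultdict
from urllib.parse import urlparse

# Assumed pattern: an http(s) URL runs until whitespace or a closing bracket.
URL_PATTERN = re.compile(r"https?://[^\s<>)\]]+")


def extract_urls(text):
    """Return the http(s) URLs found in text, minus trailing punctuation."""
    return [match.rstrip(".,;:") for match in URL_PATTERN.findall(text)]


def hostnames_by_uoa(case_studies):
    """Count cited hostnames per Unit of Assessment.

    case_studies is a hypothetical iterable of (uoa_name, section_text)
    pairs, where section_text is the corroborating sources section.
    """
    counts = defaultdict(Counter)
    for uoa, text in case_studies:
        for url in extract_urls(text):
            host = urlparse(url).netloc.lower()
            if host.startswith("www."):
                host = host[4:]  # treat www.example.org and example.org alike
            counts[uoa][host] += 1
    return counts
```

Calling `hostnames_by_uoa(...)[uoa].most_common(20)` would then list the most cited hostnames for one UoA, which is the kind of list that can be checked by hand.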

We wanted to classify the types of webpages for all 29,830 URLs but there were too many to check individually. Instead, we semi-automatically classified them by creating a list of common websites from a variety of online directories and then matching the URLs against these websites. We then manually checked the classifications of the 20 most cited URLs for each UoA. This gave a likely classification for 88 percent of the URLs; the remainder were ignored. Unclassified websites were more common in some areas, such as Engineering, than others. They often seemed to be related to impacts on small or medium-sized organizations.
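The matching step can be sketched as a lookup of each URL's hostname, and then its parent domains, in a table of known websites. This is a minimal sketch under stated assumptions rather than the procedure actually used: the `SITE_CATEGORIES` table, its category labels, and both function names are hypothetical, and a real list would be compiled from online directories as described above.

```python
from urllib.parse import urlparse

# Hypothetical lookup table; the entries and labels are illustrative only.
SITE_CATEGORIES = {
    "bbc.co.uk": "news",
    "theguardian.com": "news",
    "gov.uk": "government",
    "parliament.uk": "government",
    "youtube.com": "video",
}


def classify_url(url, categories=SITE_CATEGORIES):
    """Return the category of the website hosting url, or None if unknown."""
    host = (urlparse(url).hostname or "").lower()
    parts = host.split(".")
    # Try the full hostname first, then progressively shorter parent
    # domains, stopping before the bare top-level domain.
    for i in range(len(parts) - 1):
        candidate = ".".join(parts[i:])
        if candidate in categories:
            return categories[candidate]
    return None


def classification_rate(urls, categories=SITE_CATEGORIES):
    """Return the fraction of urls that received a category."""
    labels = [classify_url(u, categories) for u in urls]
    classified = sum(label is not None for label in labels)
    return classified / len(labels) if labels else 0.0
```

URLs for which `classify_url` returns `None` correspond to the unclassified remainder, and `classification_rate` gives the share of URLs that were matched.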

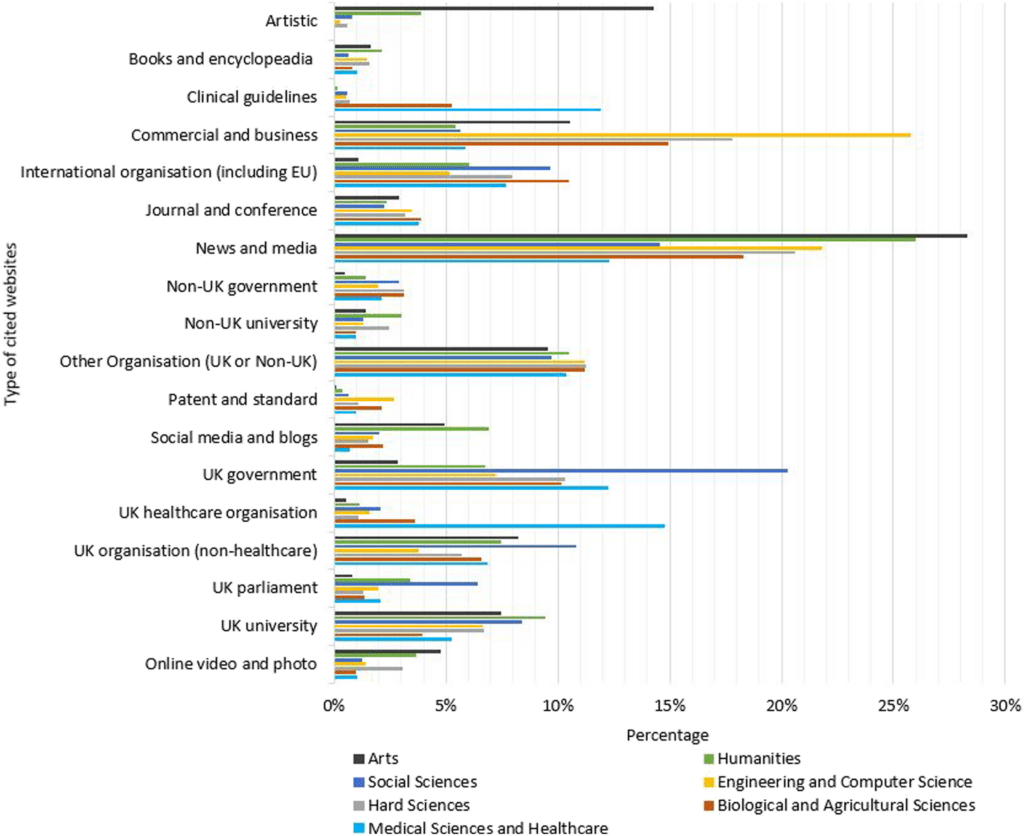

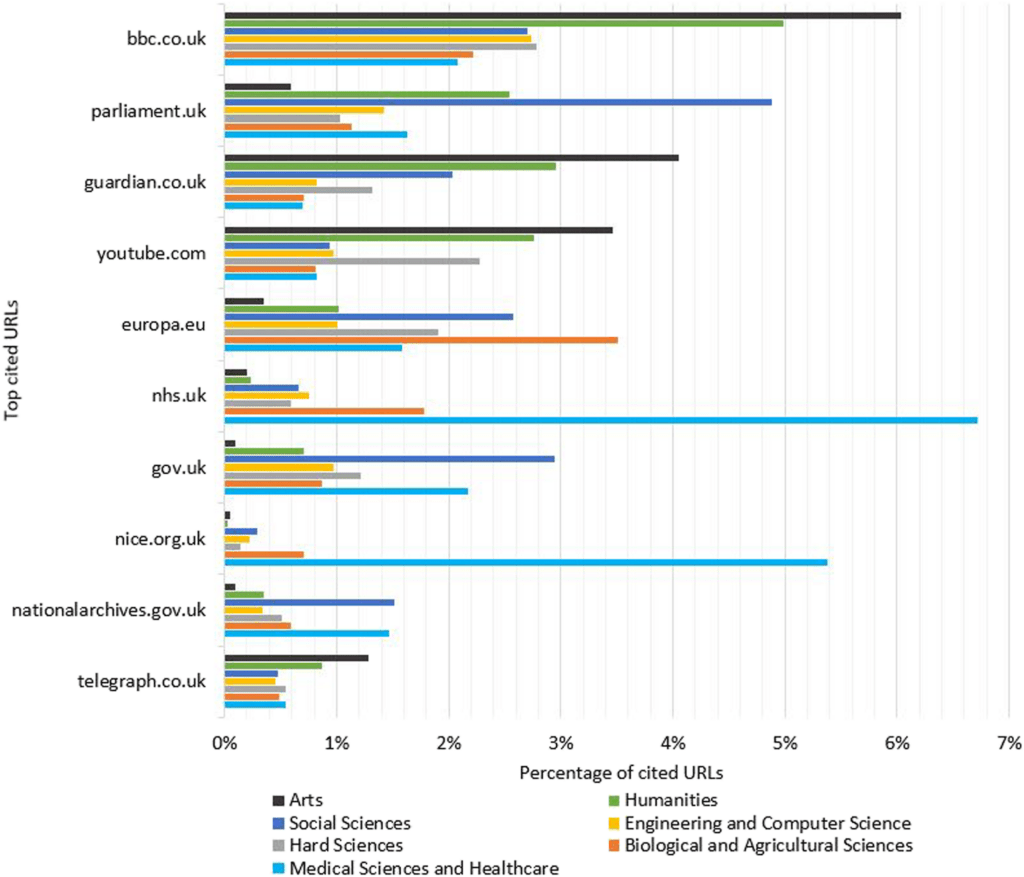

The results (Figure 1) show that business, news, and government websites are generally useful to corroborate impact in all broad disciplines. Although it would have been difficult to predict this graph in advance, there are no obvious surprises. Whereas business and government websites are presumably cited primarily as places where the impact happened, news websites might either be the form of the impact (e.g., publicity for cultural dissemination) or independent evidence that the impact occurred (e.g., a news report of an impact).

There are disciplinary differences: the social sciences seem to have more impact on government than all other areas of research, the arts and humanities draw upon news sources the most, and engineering and computer science impact on business most frequently. The arts also relatively frequently cite videos that show the impact occurring, such as videos of performances that created the claimed cultural impact, or videos that themselves generate the impact by explaining the work (e.g., the English Language and Literature UoA from the University of Huddersfield’s “Jessica Malay and Lady Anne Clifford’s Great Books of Record”). In the physical sciences, public engagement impact could also be demonstrated through popular science videos on YouTube (e.g., in the Physics UoA from the University of Nottingham, “Communicating Research to the Public through YouTube”).

Figure 2 shows the 10 websites with the most citations from all impact case studies, including the BBC, Guardian, YouTube, European Union, UK government, and UK parliament websites.

Unsurprisingly, there are also field-specific targets of impact: artistic websites are primarily cited by artistic UoAs, and UK healthcare organizations and clinical guidelines are primarily cited by biomedical and health-related UoAs. Despite this, no type of website is cited by only one type of UoA, showing that impacts from all areas occasionally occur within the natural remit of other UoAs.

In the social sciences, the web domains of UK governments (including parliament) seem to be the natural targets, although international organizations are also frequent targets. The types of webpages cited include press releases, reports, regulations, statistics, policies, guidelines, analyses, and white papers. For example, education researchers might cite an education policy paper as evidence of their influence on policy, and sociologists might cite a government report about domestic abuse that they had influenced.

From an international perspective, the UK REF impact case studies describe impacts that are heavily UK-focused. For example, several of the categories are UK-only and UK government websites are cited far more often than the websites of all other governments combined. Since impacts are allowed to occur anywhere in the world for the REF without penalty, this is perhaps surprising. It also suggests a much stronger UK focus than the geographic location section of the REF2014 impact case studies indicates (REF2014, 2015), so the general categories (e.g., business) may be more international. It is possible that impact case studies are often for research projects funded within the UK, and hence tend to focus on UK-specific goals. Non-academics may also look for local expertise to help them solve complex problems, which limits the opportunities for international impact.

The results illustrate the types of online evidence used for the impacts claimed in the previous REF. This may help UK researchers and university impact officers to recognize and describe the wider impacts of their research in future research assessment exercises. For example, it suggests the types of websites that are “natural” sources of impact evidence for different fields and that should therefore be examined carefully for useful evidence.