How Artificial Intelligence Is Transforming Psychotherapy

Cognitive computing — the use of artificial intelligence algorithms to simulate intricate components of human cognition like learning, decision making, processing and perception — sits at the intersection of artificial intelligence and psychology.

Machine learning tools like chatbots and virtual assistants can emulate the work of psychologists and psychotherapists and are even helping to address people’s basic therapeutic needs.

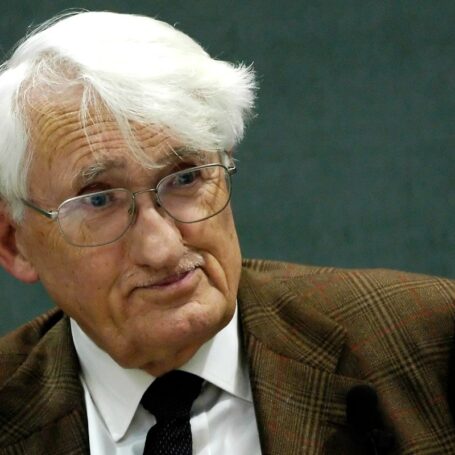

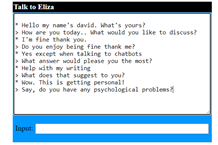

OpenAI’s ChatGPT language model and mobile apps such as Wysa, Woebot and Replika allow people to communicate with machine ‘therapists’ that ask users questions and provide supportive responses. These models are complex developments that stand on the shoulders of projects like ELIZA, a therapeutic chatbot created by computer scientist and professor Joseph Weizenbaum in the 1960s at the MIT Artificial Intelligence Laboratory. ELIZA used language processing to rephrase people’s comments as questions, and it gave rise to the term “the ELIZA effect,” the tendency to ascribe human behaviors to artificial intelligence tools.
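To make the rephrasing idea concrete, here is a minimal Python sketch of how a statement can be turned back into a question. It is not Weizenbaum’s original script, which was far richer; the `REFLECTIONS` table, the `reflect` function and the fixed “Why do you say” template are all hypothetical and chosen only for illustration.

```python
import re

# Hypothetical pronoun swaps for illustration; not ELIZA's actual rule set.
REFLECTIONS = {
    "i": "you",
    "me": "you",
    "my": "your",
    "am": "are",
    "mine": "yours",
    "myself": "yourself",
}


def reflect(statement: str) -> str:
    """Turn a first-person statement into a second-person question."""
    # Lowercase the input and keep only word-like tokens (drops punctuation).
    words = re.findall(r"[a-z']+", statement.lower())
    # Swap first-person words for second-person ones; leave the rest alone.
    swapped = [REFLECTIONS.get(word, word) for word in words]
    return "Why do you say " + " ".join(swapped) + "?"


print(reflect("I am worried about my job."))
# Why do you say you are worried about your job?
```

Even a lookup table this small can produce a reply that sounds attentive, which hints at why users were so quick to read understanding into the program.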

In the ’60s, users were very receptive to ELIZA, and even confided in the chatbot for their therapeutic needs, which was not anticipated by ELIZA’s creator. Even when Weizenbaum explained to users that the chatbot was just a program, they continued to attribute human emotions to ELIZA.

“As he enlisted people to try it out, Weizenbaum saw similar reactions again and again – people were entranced by the program. They would reveal very intimate details about their lives,” an episode of the 99 Percent Invisible podcast notes. “It was as if they’d just been waiting for someone (or something) to ask.”

Weizenbaum later criticized artificial intelligence models, arguing that fields like psychotherapy require human compassion and shouldn’t be automated. He also feared future chatbots would try to convince users they were human.

“He worried that users didn’t fully understand they were talking to a bunch of circuits and pondered the broader implications of machines that could effectively mimic a sense of human understanding,” the podcast says. “Where some saw therapeutic potential, he saw a dangerous illusion of compassion that could be bent and twisted by governments and corporations.”

Now, tools powered by artificial intelligence use a combination of machine learning algorithms and natural language processing to interpret inputs and respond appropriately. Complex computerized models, known as artificial neural networks, can learn and provide responses and predictions after being trained on existing (and presumably accurate) input data.

That training is meant to make them useful tools for individuals seeking support for various mental health-related concerns.

But today’s more complex chatbots pose challenges just as ELIZA once did. Chatbots do not have the capacity to empathize with patients, could send damaging messages to vulnerable users, aren’t designed for high-risk crisis management and may not be a viable option for users without internet access. According to Marlynn Wei, a board-certified, Harvard- and Yale-trained psychiatrist, additional measures must also be taken to protect privacy, to keep patients’ data confidential and to ensure users provide informed consent.

The public isn’t sold on robot therapists. A study by Pew Research Center indicates 75 percent of U.S. adults would be concerned if AI computer programs could know people’s thoughts and behaviors. Yet machines with no emotions could already be helping humans regulate theirs: some people are using ChatGPT as a supplement to therapy, and others on Twitter and Reddit have called the tools “incredibly helpful” and say they are “using it as therapy daily.”

While today’s machines can’t fully duplicate the capacities of human therapists, they are able to engage in conversations and serve as a supplement to traditional psychotherapy or as a self-help tool. Mental Health America says that 56 percent of U.S. adults with a mental illness, over 27 million people, do not receive treatment, and 24.7 percent of adults with mental illnesses have sought treatment but did not receive it. Chatbots could increase the accessibility of therapy; they can communicate with humans at any time or place and don’t require advance booking, as traditional psychotherapists and even telehealth providers do.

“Individuals seeking treatment but still not receiving needed services face the same barriers that contribute to the number of individuals not receiving treatment: no insurance or limited coverage of services, shortfall in psychiatrists, and an overall undersized mental health workforce, lack of available treatment types (inpatient treatment, individual therapy, intensive community services), disconnect between primary care systems and behavioral health systems, insufficient finances to cover costs including copays, uncovered treatment types, or when providers do not take insurance,” the Mental Health America website notes.

According to Forbes Health, the average cost of psychotherapy in the United States ranges from $100 to $200 per session. Therapeutic AI chat tools, on the other hand, come in free and paid versions, which helps improve the accessibility of therapy across socioeconomic levels. Machine learning tools also give those afraid of judgment the opportunity to receive care without facing stigma or discomfort. Pew Research found that 40 percent of U.S. adults would be very or somewhat excited if artificial intelligence computer systems could diagnose medical problems. That may become possible, as mental health is at the forefront of AI-powered diagnostic tools.

Independent researcher Mahshid Eshghie and Mojtaba Eshghie of the KTH Royal Institute of Technology suggest ChatGPT can assist, rather than replace, traditional therapists. Their paper, “ChatGPT as a Therapist Assistant: A Suitability Study,” published in April, described a study that involved training ChatGPT to help a therapist between sessions. According to the paper, AI could assist therapists by screening patients for mental health issues, gathering more information in one exchange than a therapist could in a single session and providing online therapy based on the insights it gains from conversations.

“ChatGPT can serve as a patient information collector, a companion for patients in between therapy sessions and an organizer of gathered information for therapists to facilitate treatment processes,” the paper reads. “The research identifies five research questions and discovers useful prompts for fine-tuning the assistant, which shows that ChatGPT can participate in positive conversations, listen attentively, offer validation and potential coping strategies without providing explicit medical advice and help therapists discover new insights from multiple conversations with the same patient.”

The use of AI tools in a psychotherapy setting could help reduce the workload of therapists and could help detect mental illnesses more quickly because, as the study notes, chatbots can gather in a single session information that might otherwise take several. Machine learning tools could also analyze patients’ data to assist therapists in creating treatment plans.

In another recent study, “Assessing The Accuracy Of Automatic Speech Recognition For Psychotherapy,” Adam Miner, a clinical psychologist and instructor in psychiatry and behavioral sciences at the Stanford School of Medicine, and his fellow researchers analyzed the ability of online tools to provide transcripts of therapy sessions and to detect keywords related to mental health symptoms and diagnoses.

As the Stanford University Human-Centered Artificial Intelligence website described, “Miner and his colleagues examined Google Speech-to-Text transcriptions of 100 therapy sessions involving 100 patient/therapist pairs. Their aim: to analyze the transcriptions’ overall accuracy, how well they detect key symptom words related to depression or anxiety, and how well they identify suicidal or homicidal thoughts.”

The study found the transcriptions had a word error rate of 25 percent. Word error rate indicates how often a tool does not transcribe the correct word. However, the mistakes in the transcripts were semantically close to the original words: when the transcription did not generate the correct words, the phrases it produced instead had a similar meaning. The report says the transcriptions could be improved with further training data.
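For readers curious how such a figure is typically calculated, the Python sketch below uses the standard definition of word error rate: the minimum number of word substitutions, deletions and insertions needed to turn the transcript into the reference, divided by the number of words in the reference. The function name and the sample sentences are illustrative assumptions, not material from the study.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words processed
    # so far and the first j words of the hypothesis.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # reference word missing from the transcript
                curr[j - 1] + 1,     # extra word in the transcript
                prev[j - 1] + cost,  # matching or substituted word
            ))
        prev = curr
    return prev[-1] / len(ref)


# Made-up example: one wrong word out of four.
print(word_error_rate("i feel anxious today", "i feel nervous today"))
# 0.25
```

The example also shows why the raw rate can overstate the problem: “nervous” counts as a full error even though it means nearly the same thing as “anxious.”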

While AI could support a lot of the work performed by therapists, it might also help improve their performance by helping to train new professionals, providing feedback to therapists based on sessions and results, and double-checking the accuracy of mental health diagnoses. Nonetheless, some therapists have concerns about the use of AI functions in their workplace.

Researchers from the University of Tuebingen and the University of Luebeck found most medical students have their own concerns about chatbots. Research described in “Chatbots For Future Docs: Exploring Medical Students’ Attitudes And Knowledge Towards Artificial Intelligence And Medical Chatbots” determined that of medical students who learned about the applications of chatbots in healthcare in a course named “Chatbots For Future Docs,” 66.7 percent have concerns regarding data protection when using AI in therapy and 58.3 percent worry chatbots will monitor them at work.

Additionally, the article explains concerns about the accuracy of AI tools and suggests extensive evaluation of the machines to ensure they are equipped to assist in supporting psychotherapy efforts. AI tools also cannot express actual emotions or read nonverbal cues that could be pivotal in identifying a patient’s emotional state.

While the enthusiasm from users and fears from Weizenbaum during the ELIZA days may seem outdated considering the complex tools available in 2023, the appeal of and concerns about the use of artificial intelligence in therapy today seem to mirror those from the past.

Still, chatbots are transforming psychology and will continue to do so as supplements to traditional therapy in low-stakes scenarios, as assistants that help psychotherapists get work done efficiently and as mechanisms for double-checking the accuracy of human therapists’ work.