Can Twitter Serve as a Tripwire for Problematic Research?

Publications that rest on wrong data, contain methodological mistakes, or suffer from other severe errors can spoil the scientific record if they are not retracted. Retraction is one effective way to correct the scientific record. However, before a problematic publication can be retracted, the problem has to be found and brought to the attention of the people involved (the authors of the publication and the editors of the journal). The earlier a problem with a published paper is detected, the earlier the publication can be retracted and the less effort is wasted on new research that builds on erroneous findings in the scientific record. Therefore, it would be advantageous to have an early warning system that spots potential problems with published papers, or perhaps even earlier, with their preprint versions.

One candidate for such a system is Twitter. In a recent case study, we tested whether Twitter data are suitable as part of an early warning system for spotting problems with publications. We analyzed the metadata of tweets that mentioned three retracted publications related to SARS-CoV-2/COVID-19 and their retraction notices. We selected these three publications from the Retraction Watch post about retracted SARS-CoV-2/COVID-19 papers (https://retractionwatch.com/retracted-coronavirus-covid-19-papers/) because, for each of them, both the publication and the retraction notice have a DOI and the dates of publication and retraction are at least two weeks apart. We looked at the following studies:

Bae, et al. (2020a) studied the effectiveness of surgical and cotton masks in blocking SARS-CoV-2. The study was published on April 6, 2020, and retracted on June 2, 2020, because the authors “had not fully recognized the concept of limit of detection (LOD) of the in-house reverse transcriptase polymerase chain reaction used in the study” (Bae, et al., 2020b). In this case, the retraction was made because of a methodological error that was not detected in the peer-review process.

Wang, et al. (2020a) reported that “SARS-CoV-2 infects T lymphocytes through its spike protein-mediated membrane fusion.” The peer-review process was very fast: the paper was submitted on March 21, 2020, and accepted three days later, on March 24, 2020. It was published on April 7, 2020, and retracted on July 10, 2020 (Wang, et al., 2020b) because “[a]fter the publication of this article, it came to the authors attention that in order to support the conclusions of the study, the authors should have used primary T cells instead of T-cell lines. In addition, there are concerns that the flow cytometry methodology applied here was flawed. These points resulted in the conclusions being considered invalid.” In this case, the retraction was made because of methodological errors that were not discovered during the peer-review process.

Of the three publications, the study by Mehra, Desai, Ruschitzka, and Patel (2020) probably drew the most attention. The authors reported that they could not confirm a benefit of hydroxychloroquine in the treatment of COVID-19. They even reported that hydroxychloroquine increases the risk of complications during treatment. The study was published on May 22, 2020, and retracted on June 5, 2020, because “several concerns were raised with respect to the veracity of the data and analyses conducted by Surgisphere Corporation and its founder” and co-author of the study. Surgisphere declined to transfer the full dataset to an independent third-party peer reviewer because that would violate client agreements and confidentiality requirements. The potential benefit or risk of hydroxychloroquine for the treatment of COVID-19 is still not clear. In this case, the retraction was made because of doubts about the validity of the underlying data, doubts that did not surface in the peer-review process.
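As a quick check of the selection criterion mentioned earlier (publication and retraction dates at least two weeks apart), the gaps can be computed from the dates reported above. This is only an illustrative sketch: the dictionary layout and the names `papers`, `gaps`, and `MIN_GAP_DAYS` are our own, and we assume that "at least two weeks" means 14 days or more.

```python
from datetime import date

# Assumption: "at least two weeks apart" is read as a gap of 14 days or more.
MIN_GAP_DAYS = 14

# Publication and retraction dates as reported in the text above.
papers = {
    "Bae et al.": (date(2020, 4, 6), date(2020, 6, 2)),
    "Wang et al.": (date(2020, 4, 7), date(2020, 7, 10)),
    "Mehra et al.": (date(2020, 5, 22), date(2020, 6, 5)),
}

# Number of days between publication and retraction for each paper.
gaps = {
    name: (retracted - published).days
    for name, (published, retracted) in papers.items()
}

for name, days in gaps.items():
    print(f"{name}: {days} days, meets criterion: {days >= MIN_GAP_DAYS}")
```

Under this reading, the gaps are 57, 94, and 14 days, so the Mehra et al. study sits exactly at the two-week threshold.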

We downloaded the metadata of the tweets in August 2020. All three retracted publications received rather high numbers of tweets (between 3,095 and 42,746). An analysis of the profile descriptions of the Twitter users shows that most tweets originated from personal accounts, faculty members and students, or professionals. Tweets from bots represented a small minority. Therefore, we can expect the tweet texts to contain informative content.

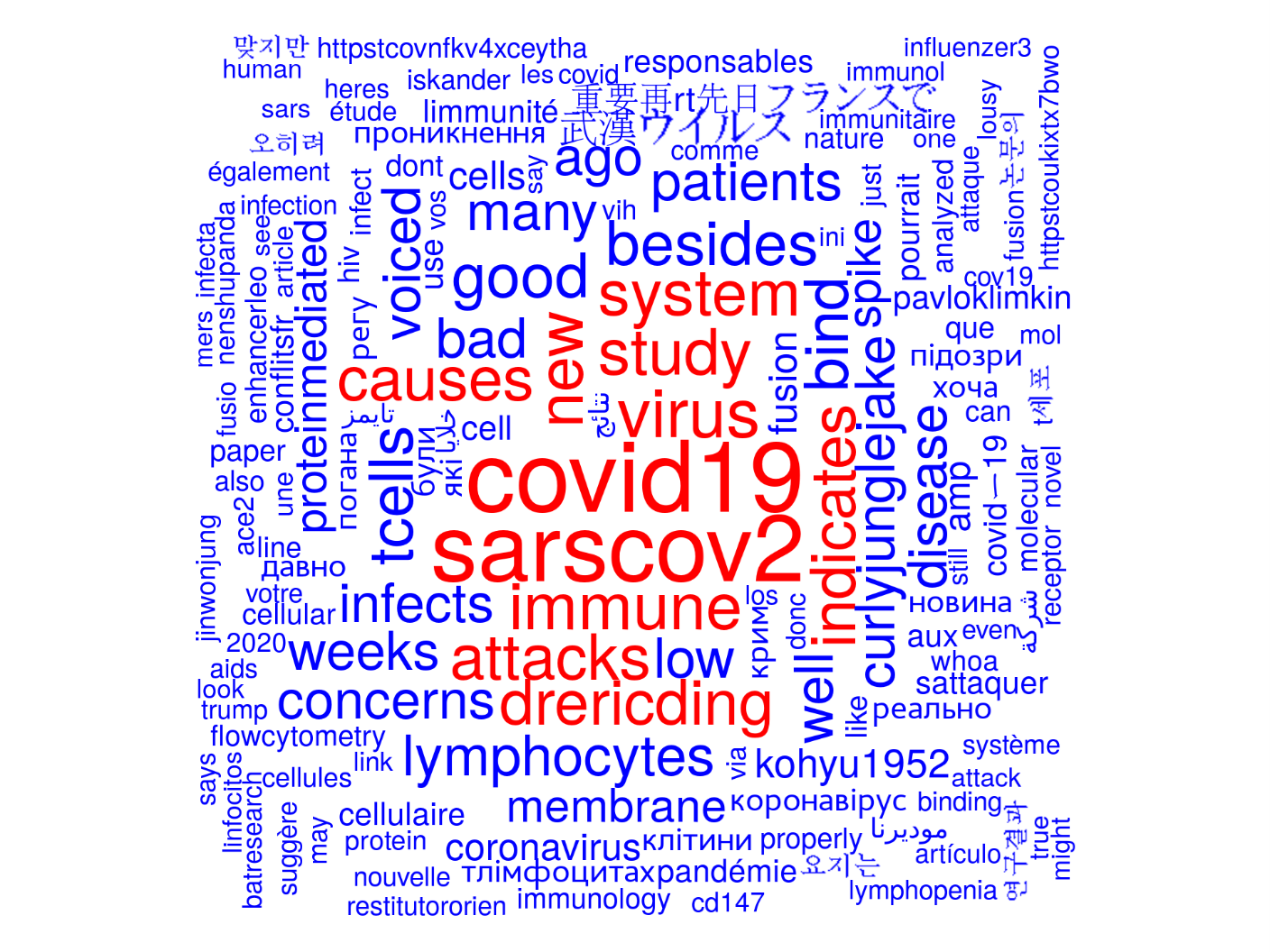

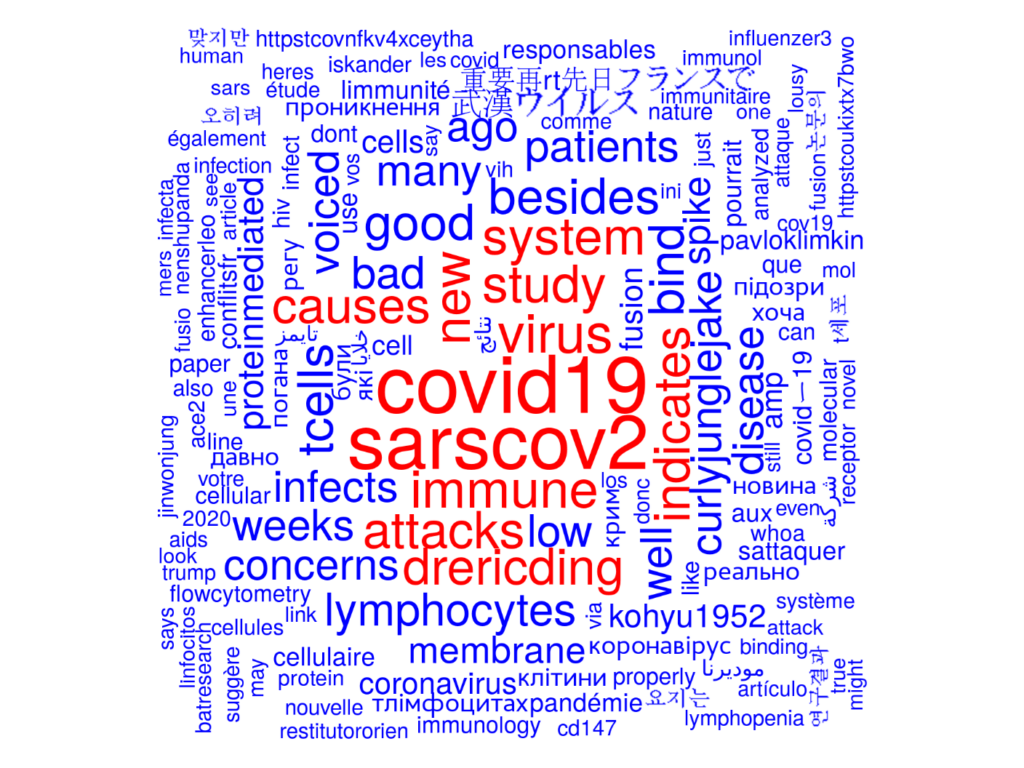

For each retracted publication and its retraction notice, we produced word clouds of the tweets before and after retraction (see, for example, the word cloud for Wang, et al. (2020a) before its retraction). We also searched the tweet texts for phrases related to the retraction reasons (e.g., ‘LOD,’ ‘limit of detection,’ ‘flow cytometry,’ ‘Surgisphere,’ and ‘data’). Inspection of the word clouds did not yield any phrases beyond those taken from the retraction notices. A manual inspection of the tweet texts that contain these phrases revealed that, for two of the three publications in our case study, tweets indeed mentioned the problems of the retracted papers before the retraction date. Our results indicate that some studies are indeed robustly discussed by experts on Twitter.
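The phrase search described above can be sketched roughly as follows. This is not the code used in the study: the record layout (dictionaries with `date` and `text` keys), the function name `find_early_mentions`, and the sample tweets are all invented for illustration. The sketch matches phrases case-insensitively on word boundaries, so that a short term such as ‘LOD’ does not match inside longer words.

```python
import re
from datetime import date


def find_early_mentions(tweets, phrases, retraction_date):
    """Return tweets posted before retraction_date that contain any phrase.

    Each tweet is a dict with a 'date' (datetime.date) and a 'text' (str);
    this layout is a hypothetical stand-in for real tweet metadata.
    """
    # One case-insensitive pattern; \b keeps 'LOD' from matching 'melody'.
    pattern = re.compile(
        r"\b(?:" + "|".join(re.escape(p) for p in phrases) + r")\b",
        re.IGNORECASE,
    )
    return [
        tweet
        for tweet in tweets
        if tweet["date"] < retraction_date and pattern.search(tweet["text"])
    ]


# Invented example tweets, not real data from the study.
sample_tweets = [
    {"date": date(2020, 4, 20), "text": "Flow cytometry gating in this paper looks off."},
    {"date": date(2020, 5, 1), "text": "Interesting result on T cells!"},
    {"date": date(2020, 7, 12), "text": "Retracted over flow cytometry concerns."},
]

# Retraction date of Wang, et al. (2020a) as reported above.
early = find_early_mentions(sample_tweets, ["flow cytometry"], date(2020, 7, 10))
print(len(early))
```

Tweets found this way are only candidates. As in the case study, they still need to be read manually to decide whether they actually raise the problem that later led to the retraction.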

These findings lead to the conclusion that Twitter data might be helpful for spotting potential problems with publications. However, an early warning system that uses Twitter data, and perhaps other internet sources, can only provide hints of potential problems. Potentially problematic cases have to be carefully evaluated by experts in the field. The closed peer review organized by many journals might not be enough to prevent errors in published research articles. A more open peer review might help to prevent such errors in the first place, and post-publication peer-review forums might help to correct the scientific record. Our findings are based on a very small sample and manually chosen search phrases. Further studies based on larger publication sets and a consolidated set of search phrases should be conducted to see whether our encouraging results can be confirmed. Follow-up studies might also benefit from including data sources other than Twitter (e.g., discussions on post-publication peer-review sites, such as PubPeer).

This post draws on the authors’ article, “Can tweets be used to detect problems early with scientific papers? A case study of three retracted COVID-19/SARS-CoV-2 papers,” published in Scientometrics.