From ‘Which Database?’ to ‘Under What Conditions?’: Teaching Critical Thinking Through Search Tool Selection in an AI Age

A few years ago, if you asked students where they began their research, the answer was predictable: “Google” or “Google Scholar.” Today, it is just as likely to be “ChatGPT.”

But if you ask them why they chose that tool—why this platform rather than another—the room grows quieter.

As an academic librarian, I have come to believe that this “why” question is where critical thinking either begins or quietly disappears. In an information ecosystem saturated with AI systems, discovery layers, preprint servers, and disciplinary databases, the central intellectual task is no longer access. It is judgment.

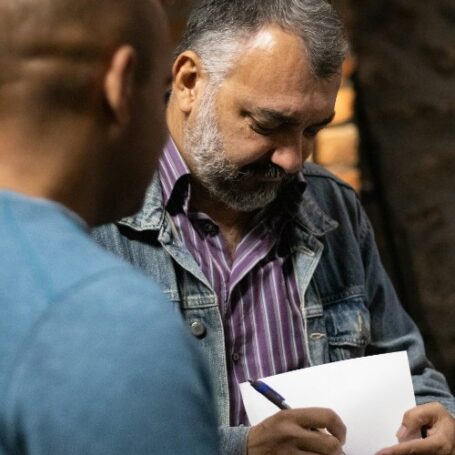

In my information literacy sessions, often one-shot workshops embedded in engineering and interdisciplinary courses, I use a hands-on activity titled “Which Search Tool Should I Use? Choosing Research Tools Based on Research Conditions.” On the surface, it looks like a practical worksheet about databases. In reality, it is a structured exercise in critical thinking.

The shift is simple but powerful: instead of asking students where to search, I ask them to analyze the research situation first.

Moving Beyond Tool Demonstrations

Traditional library instruction often centers on demonstration. We show students Web of Science, Scopus, or Google Scholar. We teach keyword strategies and filters. These skills matter.

But demonstration alone can unintentionally reinforce passive thinking. Students may leave believing that expertise means knowing the “right” database rather than understanding why a tool fits a particular research need.

Critical thinking begins when we make those assumptions visible.

So I redesigned the session around conditions rather than platforms.

Step 1: Identifying Research Conditions

The activity begins with a concrete topic, such as the role of artificial intelligence in signal processing for 6G networks. Instead of opening a database, students work in groups to identify key research conditions:

- Are we exploring the topic or conducting a focused literature review?

- Do we need peer-reviewed sources?

- How recent must the research be?

- Is disciplinary specificity important?

- Are there assignment restrictions on AI use?

- Are we under time constraints?

This exercise reframes information discovery as situational rather than procedural. Students analyze the structure of the problem before reaching for a solution. They begin to see that tool choice is conditional—not universal.

Step 2: Evaluating Tools in Context

Next, students evaluate different search tools—GenAI tools, library discovery platforms, discipline-specific databases like IEEE Xplore, and Google Scholar—by answering two questions:

- When is this tool strongest?

- When is it limited or inappropriate?

The conversation quickly moves beyond comparing interfaces. Students recognize that AI tools may help generate terminology or refine research questions, but they also confront limitations: opaque training data, hallucinated citations, uneven coverage of peer-reviewed literature, and embedded biases.

Similarly, they note that IEEE Xplore excels at discipline-specific scholarship but may be too narrow for broader, interdisciplinary inquiries.

Instead of labeling tools as good or bad, students learn to assess them based on purpose and evidence. They practice comparative judgment rather than default preference.

In an AI-saturated environment, the question shifts from “Should I use AI?” to “Under what research conditions does AI meaningfully support learning—and when does it undermine it?”

Step 3: Designing a Strategic Sequence

In the final stage, students design a research sequence rather than choosing one “best” tool. For example:

- Use an AI tool to clarify terminology and refine keywords.

- Move to a discipline-specific database for peer-reviewed research.

- Use citation tracking to map scholarly conversations.

This sequencing turns tool selection into synthesis. It demands metacognition: Why this order? What does each tool contribute? When should I switch strategies?

On the surface, this looks like a database workshop. Beneath it, students are practicing analysis, evaluation, inference, and judgment. They are shifting from convenience-driven searching to condition-driven reasoning.

The Library’s Role in Raising the Cognitive Bar

Academic libraries are uniquely positioned to model this form of critical thinking. We mediate between publishers, repositories, AI systems, and disciplinary databases. We see how infrastructures shape inquiry.

Our role is not merely to provide access. It is to cultivate research judgment: the capacity to select, combine, and critique information systems based on context and purpose.

Critical thinking is often taught as an abstraction: discussed in theory but rarely practiced in routine decisions. Search tool selection, by contrast, is a recurring academic act. When we redesign that moment to foreground conditions and reasoning, we transform an ordinary task into an intellectual habit.

The tools will continue to evolve. AI interfaces will become more seamless. Search algorithms will grow more sophisticated.

What must not disappear is the pause before the query:

Under what conditions am I searching—and why does this tool make sense here?